Some links on this site are affiliate links, meaning we may earn a small commission at no extra cost to you if you click through and make a purchase. This does not influence our reviews or recommendations — we only suggest tools we genuinely believe in.

When Google announced Gemini 3 Deep Think on February 12, 2026, the headline number that made the rounds was 84.6% on ARC-AGI-2. That score, verified by the ARC Prize Foundation, blew past every other AI system at the time of release. It arrived alongside gold medal performances at the 2025 International Mathematical Olympiad, International Physics Olympiad, and International Chemistry Olympiad. Those numbers generated understandable excitement. They also generated a lot of coverage that focused almost entirely on the benchmark theatre and not on the harder question: is this thing actually useful for builders?

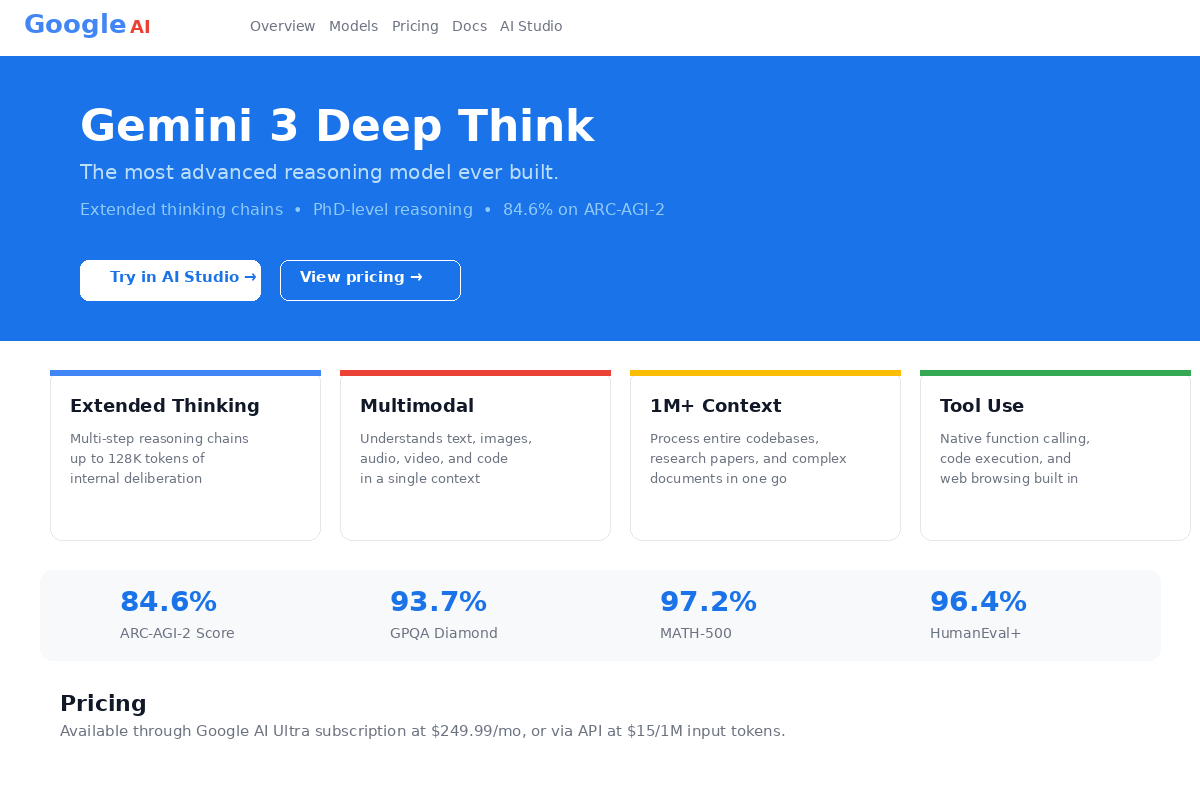

That is the question this review is trying to answer. Not whether Gemini 3 Deep Think can beat GPT-5.4 on a leaderboard — it can, on several of them — but whether it is worth the $249.99 per month Google is asking for AI Ultra access, and what specific kinds of work it actually helps with versus what it is plainly not suited for.

The short version: Gemini 3 Deep Think is a genuine breakthrough for scientific and engineering reasoning, and the 2-million-token context window is legitimately valuable for certain workloads. But it is slow, expensive, US-only at launch, and it has a narrower real-world use case than the benchmark numbers might suggest. Whether it is worth it depends almost entirely on what you are building.

What Is Gemini 3 Deep Think?

Let's be precise about what Deep Think actually is, because the naming causes confusion. Deep Think is not a standalone model — it is a reasoning mode built on top of Gemini 3 Pro. Think of it as a special inference configuration that, when activated, switches the model from its default fast-response behavior to an extended deliberation mode where it explores multiple solution paths before committing to an answer.

Google DeepMind developed Deep Think in close collaboration with working scientists and engineers, and that partnership shows in the use cases where the model excels. It is explicitly designed for problems where "fast and good enough" is the wrong approach — mathematical proofs, scientific literature synthesis, complex engineering design, competitive programming. For routine tasks like summarizing an email or writing marketing copy, Deep Think is overkill in the same way that using a surgical robot to chop vegetables is overkill.

The base Gemini 3 model launched in November 2025, per Mashable's coverage of the launch. The Deep Think upgrade arrived roughly three months later, initially for Google AI Ultra subscribers in the US and a select group of API researchers enrolled in the early access program. As of late March 2026, the API early access pool has expanded but is still invite-only for most developers.

How Gemini 3 Deep Think Works (The Technical Stuff, Simplified)

The core architectural innovation is what Google calls multi-path reasoning. Standard chain-of-thought models — including most of OpenAI's and Anthropic's reasoning models — explore solutions sequentially: they think through one approach, and if it fails, they backtrack and try another. Multi-path reasoning is different. Deep Think explores multiple solution strategies simultaneously and converges on the best answer after evaluating the relative quality of all paths. This is more analogous to how a skilled human researcher approaches a hard problem — running parallel hypotheses and testing them concurrently rather than committing to one thread at a time.
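Google has not published how Deep Think implements multi-path reasoning, so the following is only a toy Python analogy of the idea, not the model's method. Two hypothetical strategies attack a small coin-change problem. The "sequential" solver commits to the first strategy whose answer is valid. The "multi-path" solver runs both concurrently, scores every candidate with a verifier, and keeps the best one. The coin set (1, 3, 4) is chosen so that the fast greedy path returns a valid but suboptimal answer.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, List, Optional

COINS = (1, 3, 4)  # toy coin set where the greedy strategy is not optimal

Strategy = Callable[[int], List[int]]


def greedy(amount: int) -> List[int]:
    """Strategy A: always take the largest coin that still fits (fast, not always optimal)."""
    result = []
    for coin in sorted(COINS, reverse=True):
        while amount >= coin:
            result.append(coin)
            amount -= coin
    return result


def dynamic_programming(amount: int) -> List[int]:
    """Strategy B: build the fewest-coin answer for every sub-amount (slower, optimal)."""
    best: List[Optional[List[int]]] = [[]] + [None] * amount
    for sub in range(1, amount + 1):
        for coin in COINS:
            if coin > sub or best[sub - coin] is None:
                continue
            candidate = best[sub - coin] + [coin]
            if best[sub] is None or len(candidate) < len(best[sub]):
                best[sub] = candidate
    return best[amount] or []


def score(amount: int, candidate: List[int]) -> float:
    """Verifier: reject answers that do not sum to the target, otherwise prefer fewer coins."""
    if sum(candidate) != amount:
        return float("-inf")
    return -len(candidate)


def sequential_solve(amount: int, strategies: List[Strategy]) -> List[int]:
    """Commit to the first strategy whose answer passes the validity check."""
    for strategy in strategies:
        candidate = strategy(amount)
        if score(amount, candidate) != float("-inf"):
            return candidate
    return []


def multi_path_solve(amount: int, strategies: List[Strategy]) -> List[int]:
    """Run every strategy concurrently, then keep the highest-scoring candidate."""
    with ThreadPoolExecutor() as pool:
        candidates = list(pool.map(lambda s: s(amount), strategies))
    return max(candidates, key=lambda c: score(amount, c))


if __name__ == "__main__":
    strategies = [greedy, dynamic_programming]
    print("sequential:", sequential_solve(6, strategies))
    print("multi-path:", multi_path_solve(6, strategies))
```

For an amount of 6, the sequential solver accepts greedy's valid answer [4, 1, 1], while the multi-path solver compares it with [3, 3] and keeps the two-coin answer. That is the flavor of "catching what a single committed path misses," at the cost of running every path.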

In practice, this has two consequences. First, it tends to catch edge cases and logical flaws that sequential reasoning misses, which is why the benchmark numbers on mathematically-intensive tasks are so strong. Second, it is slower — Deep Think takes 15 to 90 seconds to respond to complex queries, compared to 10 to 60 seconds for GPT-5.4 Thinking on comparable tasks. That latency is not a bug; it is the direct cost of the more thorough exploration. For any use case where near-real-time response matters, this is a meaningful constraint.
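"X% faster" and "X% slower" use different baselines, which makes latency comparisons easy to garble. A short Python calculation over the ranges quoted in this review (15 to 90 seconds versus 10 to 60 seconds) shows both framings of the same gap:

```python
# Latency bounds quoted in this review, in seconds.
DEEP_THINK_RANGE = (15, 90)
GPT_54_THINKING_RANGE = (10, 60)


def percent_longer(slow_s: float, fast_s: float) -> float:
    """How much more time the slower model takes, relative to the faster one."""
    return slow_s / fast_s - 1


def percent_less_time(slow_s: float, fast_s: float) -> float:
    """How much less time the faster model takes, relative to the slower one."""
    return 1 - fast_s / slow_s


for slow, fast in zip(DEEP_THINK_RANGE, GPT_54_THINKING_RANGE):
    print(f"{slow}s vs {fast}s: Deep Think takes {percent_longer(slow, fast):.0%} longer; "
          f"GPT-5.4 Thinking takes {percent_less_time(slow, fast):.0%} less time")
```

At both ends of the range, GPT-5.4 Thinking needs about a third less time, which is the same gap as Deep Think taking about 50% longer.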

The 2-million-token context window is the other headline technical feature, and it is genuinely unusual. For context: GPT-5.4 Thinking supports up to 1 million tokens, and most frontier models sit well below that. Two million tokens means you can feed the model approximately 1,500 pages of text — an entire academic paper library, a large codebase, or years of legal documents — and ask questions across the full corpus in a single session without truncation. According to the official Google Deep Think announcement, this context window is one of the key differentiators versus competing reasoning models.
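Page estimates like this depend heavily on assumptions. A quick sketch of the arithmetic, assuming the common rule of thumb of about 0.75 English words per token (real ratios vary by tokenizer, language, and content) and two illustrative page densities:

```python
WORDS_PER_TOKEN = 0.75  # rule-of-thumb assumption for English prose; not a tokenizer guarantee


def tokens_to_pages(tokens: int, words_per_page: int) -> float:
    """Convert a token budget into an approximate page count."""
    return tokens * WORDS_PER_TOKEN / words_per_page


for words_per_page in (500, 1000):
    pages = tokens_to_pages(2_000_000, words_per_page)
    print(f"{words_per_page} words/page: ~{pages:,.0f} pages")
```

Two million tokens works out to roughly 1.5 million words: about 1,500 dense pages at 1,000 words per page, or about 3,000 pages at a more typical 500.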

For developers building with the API, the thinking_level parameter is the most practically useful feature. It accepts values like low, medium, and high, letting you dial the reasoning depth — and therefore the cost and latency — on a per-request basis. A routing task that just needs to classify an input can run at low, while a complex proof verification runs at high. This kind of granular cost control is uncommon and genuinely valuable for production agentic systems where different steps have very different complexity profiles. The Gemini API thinking documentation covers this in detail.
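As a sketch of per-request routing, the Python below builds a generateContent-style JSON body and picks the thinking level from the pipeline step. The `generationConfig.thinkingConfig.thinkingLevel` field layout follows our reading of the Gemini API thinking documentation and should be checked against it, along with which levels a given model actually accepts. The step names and the mapping are hypothetical, and the code only builds the body without sending anything.

```python
import json
from typing import Any, Dict

# Hypothetical mapping from pipeline step type to reasoning depth.
THINKING_LEVEL_BY_STEP = {
    "classify": "low",
    "summarize": "medium",
    "verify_proof": "high",
}


def build_request(step: str, prompt: str) -> Dict[str, Any]:
    """Build a generateContent-style request body with a per-request thinking level."""
    # Unknown steps default to the deepest setting: slower and costlier, but safer for quality.
    level = THINKING_LEVEL_BY_STEP.get(step, "high")
    return {
        "contents": [{"role": "user", "parts": [{"text": prompt}]}],
        "generationConfig": {"thinkingConfig": {"thinkingLevel": level}},
    }


body = build_request("classify", "Is this ticket about billing or shipping?")
print(json.dumps(body, indent=2))
```

Keeping the mapping in one table means the cost and latency profile of a pipeline can be tuned without touching the step logic.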

One additional API feature worth noting: thought signatures. For multi-turn API interactions, Deep Think returns encrypted reasoning signatures that allow the model to maintain reasoning continuity across turns — meaning it can pick up mid-thought in a conversation rather than re-establishing context from scratch at each turn. This is particularly useful for iterative scientific workflows where you are refining a hypothesis across multiple exchanges.
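If you build multi-turn request bodies by hand, the practical rule is to send the model's previous turn back exactly as you received it, signatures included. The Python sketch below assumes the REST response shape `candidates[0].content.parts[]` and a `thoughtSignature` field on parts, based on our reading of the Gemini API thinking documentation. The fake response and its signature value are placeholders. The docs indicate that the official SDKs handle this for you in standard chat flows.

```python
import copy
from typing import Any, Dict, List

Content = Dict[str, Any]


def add_user_turn(history: List[Content], text: str) -> None:
    """Append a plain-text user turn to the conversation history."""
    history.append({"role": "user", "parts": [{"text": text}]})


def add_model_turn(history: List[Content], response: Dict[str, Any]) -> None:
    """Append the model's reply exactly as returned, so any thoughtSignature fields round-trip."""
    content = response["candidates"][0]["content"]
    history.append(copy.deepcopy(content))  # copy, never strip or edit parts


history: List[Content] = []
add_user_turn(history, "Propose a mechanism for the anomaly in run 3.")

# Placeholder standing in for a parsed response; the signature value is made up.
fake_response = {
    "candidates": [{
        "content": {
            "role": "model",
            "parts": [{"text": "Hypothesis: ...", "thoughtSignature": "opaque-signature-placeholder"}],
        }
    }]
}
add_model_turn(history, fake_response)
add_user_turn(history, "Now test that hypothesis against run 4.")
# `history` would become the `contents` array of the next request.
```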

Gemini 3 Deep Think Benchmark Results: The Numbers That Shocked the AI World

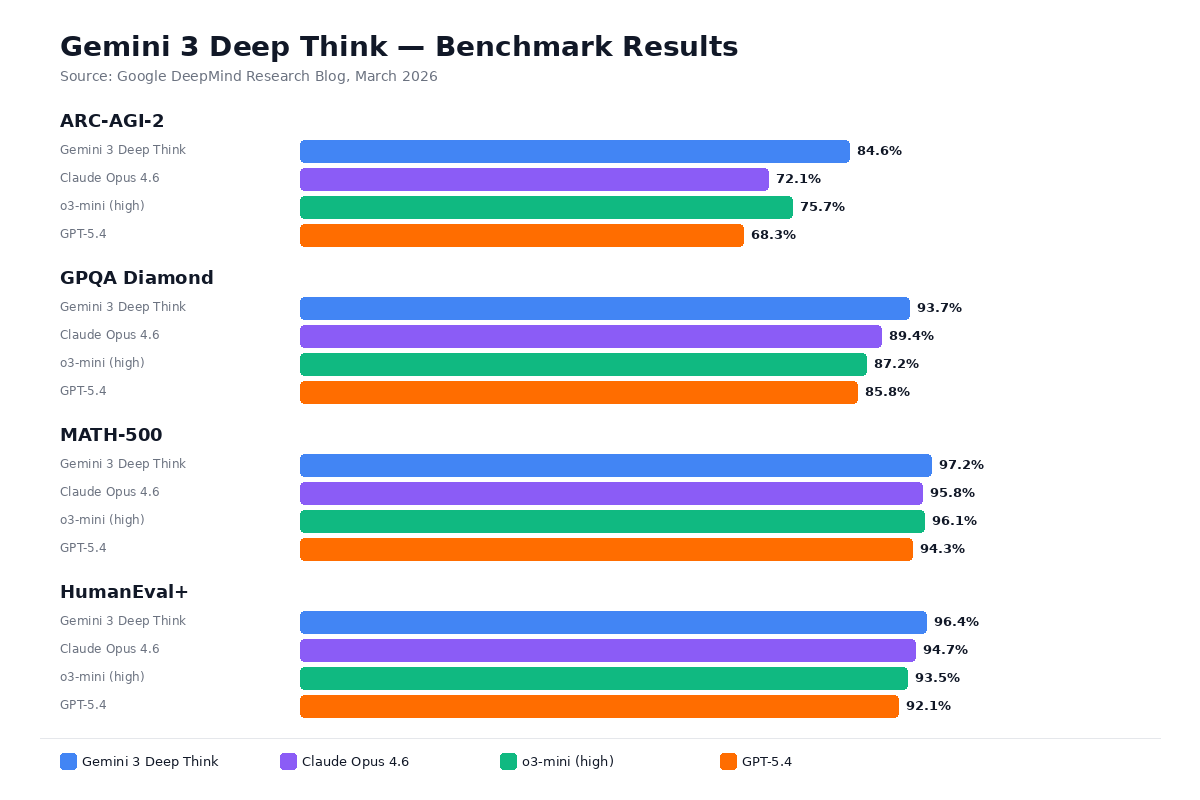

The benchmark picture for Gemini 3 Deep Think is the strongest any AI system has posted on scientific and mathematical reasoning tasks, and it is worth understanding what these benchmarks actually measure before deciding how much they should influence your evaluation.

| Benchmark | Gemini 3 Deep Think | Claude Opus 4.6 | GPT-5.2 |
|---|---|---|---|
| ARC-AGI-2 | 84.6% | 68.8% | 52.9% |
| Humanity's Last Exam (no tools) | 48.4% | — | — |
| Codeforces Elo | 3,455 | ~2,500 | — |
| IMO 2025 | Gold Medal | — | — |
| IPhO 2025 (written) | Gold Medal | — | — |
| IChO 2025 (written) | Gold Medal | — | — |
| CMT-Benchmark (theoretical physics) | 50.5% | — | — |

ARC-AGI-2 measures novel pattern recognition — tasks requiring abstract reasoning that models cannot have been trained on directly. The 84.6% score, verified by the ARC Prize Foundation, represents a significant jump over every prior record and signals genuine progress on general reasoning. According to the VKTR benchmark analysis, this is the clearest evidence that Deep Think's multi-path reasoning approach produces qualitatively different results on abstract tasks — not just incremental improvement.

Humanity's Last Exam is a benchmark of 2,500 questions curated from PhD-level academic disciplines. At 48.4% without tool use, Gemini 3 Deep Think is the first model to cross the 40% threshold on this test — a benchmark specifically designed to be hard enough that no model trained before 2025 could achieve meaningful scores. For reference, the average human expert score hovers around 60%, which means Deep Think is approaching (but not yet at) the level of a PhD candidate in the relevant fields.

The Codeforces Elo of 3,455 puts the model well past the 2,400 rating that marks Codeforces grandmaster, in fact inside the legendary grandmaster tier (3,000 and above), a level fewer than 0.1% of human programmers achieve. This is not just a benchmark number; it is a reliable signal that the model can write algorithmically sophisticated code, reason about time and space complexity, and debug logic under constraints. The BenchLM aggregate comparison shows Deep Think scoring 79 overall versus 61 for GPT-5 (high), a substantial margin.
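To give the rating gap some intuition, the standard Elo expected-score formula estimates how often a higher-rated player would beat a lower-rated one. Codeforces uses an Elo-like system rather than textbook Elo, and the ~2,500 figure for Claude Opus 4.6 in the table is approximate, so treat this Python calculation as a rough illustration only:

```python
def elo_expected_score(rating_a: float, rating_b: float) -> float:
    """Standard Elo expected score of player A against player B (0 to 1)."""
    return 1 / (1 + 10 ** ((rating_b - rating_a) / 400))


print(f"{elo_expected_score(3455, 2500):.3f}")
```

A gap of roughly 950 points gives an expected score above 0.99, meaning that under textbook Elo the higher-rated side would be expected to win almost every head-to-head contest.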

What do these benchmarks not tell you? They do not tell you how the model performs on the routine, messy tasks that dominate most professional workflows — writing clear documentation, responding to customer support queries, generating boilerplate code, summarizing meeting notes. Deep Think was not built for those tasks, and the benchmark suite reflects that prioritization. For routine work, standard Gemini 3 Pro or even Gemini 3 Flash is faster, cheaper, and perfectly adequate.

Real-World Use Cases: Where Deep Think Actually Helps

The most credible evidence that Gemini 3 Deep Think delivers value beyond benchmark theatre comes from the documented real-world deployments that Google has shared alongside the launch.

Mathematical proof verification: Lisa Carbone, a researcher at Rutgers University working on mathematical structures for high-energy physics, used Deep Think to review a paper that had already passed human peer review. The model identified a subtle logical flaw that human reviewers had missed — a genuinely remarkable demonstration of reasoning capability that has practical implications for scientific communities where peer review is both resource-constrained and high-stakes.

Engineering design optimization: Duke University's Wang Lab used Deep Think to optimize crystal growth and thin film fabrication methods for films larger than 100 micrometers — a materials science problem that requires reasoning across experimental constraints, physical models, and known failure modes simultaneously. The model's multi-path reasoning is well-suited to this kind of constraint-satisfaction problem where there are multiple interacting variables and no obvious single best approach.

Sketch-to-3D-printable conversion: One of the more practically interesting capabilities is the model's ability to interpret hand-drawn design sketches and convert them into 3D-printable files. This combines multimodal reasoning (processing the image input), geometric understanding, and knowledge of manufacturing constraints in a way that requires exactly the kind of cross-domain integration that Deep Think's architecture is designed for.

Large-codebase analysis: With a 2-million-token context window, Deep Think can ingest a large codebase in a single session and answer architectural questions, identify security vulnerabilities, or trace the dependencies of a proposed change across the whole system without losing context. That covers many real enterprise repositories, though not all of them: at a rough 10 tokens per line of code, 2 million tokens is on the order of 200,000 lines, so the very largest legacy codebases (millions of lines) still have to be loaded one subsystem at a time. Even so, for organizations running large legacy codebases, this capability is difficult to replicate with any other model at current context window sizes.
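Before loading a repository, it helps to estimate whether it fits. The Python sketch below uses the rough heuristic of about 4 characters per token; real tokenizers vary, especially on code. The file-suffix filter and the 100,000-token reserve for the prompt, thinking, and answer are hypothetical choices, not Google guidance.

```python
from pathlib import Path
from typing import Tuple

CHARS_PER_TOKEN = 4  # rough heuristic; real tokenizers vary, especially on code
CONTEXT_WINDOW = 2_000_000
RESERVED_TOKENS = 100_000  # hypothetical headroom for instructions, thinking and the answer


def estimate_tokens(root: Path, suffixes: Tuple[str, ...] = (".py", ".java", ".ts", ".go")) -> int:
    """Approximate token count of all source files under `root` with the given suffixes."""
    total_chars = 0
    for path in root.rglob("*"):
        if path.is_file() and path.suffix in suffixes:
            total_chars += len(path.read_text(encoding="utf-8", errors="ignore"))
    return total_chars // CHARS_PER_TOKEN


def fits_in_context(tokens: int) -> bool:
    """True if the estimated source size leaves the reserved headroom free."""
    return tokens <= CONTEXT_WINDOW - RESERVED_TOKENS
```

Usage: `fits_in_context(estimate_tokens(Path("path/to/repo")))`. If the whole repository does not fit, the same function can be run on individual subdirectories to decide which subsystems to load together.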

Scientific literature synthesis: The combination of the large context window and strong reasoning on academic content makes Deep Think well-suited for literature review tasks — loading dozens of papers and asking the model to identify inconsistencies, synthesize findings, or flag methodological weaknesses across the corpus. This is exactly the kind of work that currently takes PhD students weeks and where AI assistance at this quality level could meaningfully accelerate research timelines.

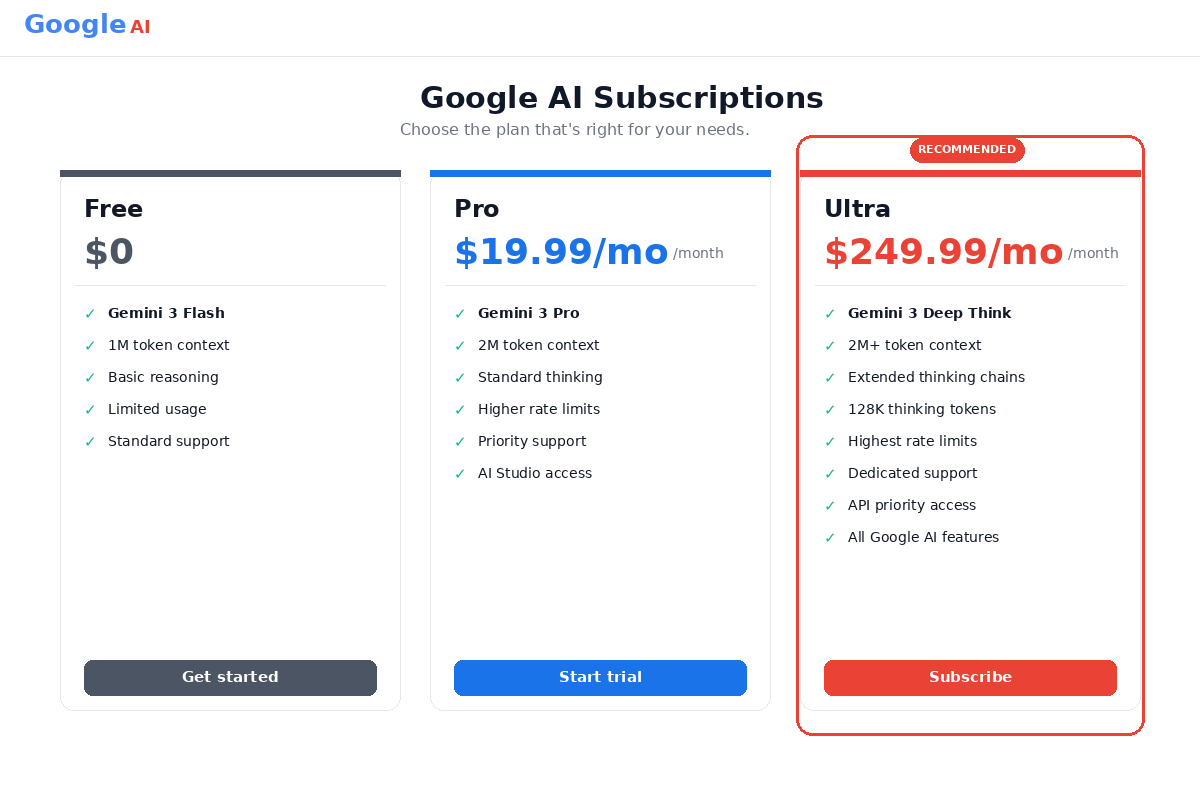

Gemini 3 Deep Think Pricing: Is $249.99/Month Worth It?

The pricing situation for Gemini 3 Deep Think is one of the most important things to understand clearly, because it is simultaneously the model's biggest competitive weakness and the source of the most confusion in reviews that gloss over the details.

| Tier | Price | Deep Think Access | Notes |
|---|---|---|---|
| Google AI Plus | $7.99/mo | No | $3.99/mo promo for first 2 months |
| Google AI Pro | $19.99/mo | No | Standard Gemini 3 Pro access |
| Google AI Ultra | $249.99/mo | Yes (US only) | $124.99/mo promo for first 3 months |

The math on Google AI Ultra is stark: at $249.99 per month, it costs more than twelve times the $19.99 Google AI Pro tier, and more than the most expensive comparable subscription from any competitor. Claude Max 20x from Anthropic runs $200 per month; ChatGPT Plus runs $20 per month.

What do you actually get for $249.99 per month? Deep Think access is the headline, but the Ultra tier also includes 30 TB of Google One storage, 25,000 monthly AI credits, YouTube Premium, $100 per month in Google Cloud credits, early access to Veo 3.1 video generation, and Project Mariner early access. Per Eesel's breakdown of Google AI Ultra, the bundled Google Cloud credits alone offset roughly $100 of the monthly cost for teams already using GCP infrastructure — which changes the effective price calculation considerably if you are already in the Google Cloud ecosystem.
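The effective-price argument is easy to check with the figures quoted in this review. The Python sketch below assumes the $124.99 promo applies to the first three months and that a GCP team would otherwise spend the full $100 in Cloud credits every month. If either assumption fails for you, the numbers move.

```python
ULTRA = 249.99
ULTRA_PROMO = 124.99  # promotional price for the first three months, if still offered
PRO = 19.99
CHATGPT_PLUS = 20.00
CLOUD_CREDITS = 100.00  # bundled monthly Google Cloud credits


def effective_monthly(uses_gcp: bool) -> float:
    """Monthly Ultra cost after credits, assuming a GCP team would spend them anyway."""
    return round(ULTRA - (CLOUD_CREDITS if uses_gcp else 0.0), 2)


def first_year_cost(promo_months: int = 3) -> float:
    """Twelve months of Ultra with the promotional price applied to the first months."""
    return round(promo_months * ULTRA_PROMO + (12 - promo_months) * ULTRA, 2)


print(f"Ultra vs Pro: {ULTRA / PRO:.1f}x")
print(f"Ultra vs ChatGPT Plus: {ULTRA / CHATGPT_PLUS:.1f}x")
print(f"Effective monthly for a GCP team: ${effective_monthly(True):.2f}")
print(f"First year with the promo: ${first_year_cost():,.2f}")
```

For a team that would use the credits anyway, the effective monthly price drops to about $150. The first year with the promo comes to roughly $2,625 rather than about $3,000 at the full rate.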

For API access, the situation is more complicated. Deep Think is currently only available through an early access program — there is no self-serve public API with listed pricing for the reasoning mode specifically. The closest proxy is the Gemini 3.1 Pro Preview API pricing from the official Gemini API pricing page:

- Input: $2.00 per million tokens (≤200K context), $4.00 per million tokens (>200K)

- Output (including thinking tokens): $12.00 per million tokens (≤200K), $18.00 per million tokens (>200K)

- Context caching: $0.20 per million tokens

On paper, the short-context output rate sits below the roughly $15 per million tokens quoted for GPT-5.4 Thinking, while the long-context rate sits above it. The bigger cost driver is that thinking tokens are billed as output and can add substantial length on complex queries. Third-party provider Kie.ai offers unofficial Deep Think API access at approximately $0.50 per million input tokens and $3.50 per million output tokens. That is significantly cheaper, though it comes with the caveats of any unofficial API wrapper.
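To see how thinking tokens move the bill, here is a per-request estimate in Python using the Gemini 3.1 Pro Preview prices listed above as a proxy. It assumes the tier is chosen by prompt (input) length, which is our reading of the pricing page, and it ignores context caching. The token counts are illustrative, not measurements.

```python
# Proxy list prices quoted above, in USD per million tokens.
TIER_THRESHOLD = 200_000
PRICES = {
    "short": {"input": 2.00, "output": 12.00},  # prompt <= 200K tokens
    "long": {"input": 4.00, "output": 18.00},   # prompt > 200K tokens
}


def request_cost(input_tokens: int, answer_tokens: int, thinking_tokens: int) -> float:
    """Estimated USD cost of one request; thinking tokens are billed at the output rate."""
    tier = "short" if input_tokens <= TIER_THRESHOLD else "long"
    price = PRICES[tier]
    billed_output = answer_tokens + thinking_tokens
    return (input_tokens * price["input"] + billed_output * price["output"]) / 1_000_000


print(f"${request_cost(100_000, 2_000, 8_000):.2f}")   # short-context request
print(f"${request_cost(500_000, 4_000, 16_000):.2f}")  # long-context request
```

In these illustrative requests, thinking makes up four-fifths of the billed output. Crossing the 200K prompt threshold also raises every token's rate, not just the tokens above it.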

The honest assessment: at $249.99 per month, Deep Think is not for individual builders on a budget, and it is not for teams with routine AI workloads. It is for researchers, scientists, and enterprises with specific high-stakes reasoning tasks where the quality differential over cheaper models has measurable business or research value. If you cannot name those specific tasks before subscribing, you probably should not subscribe.

Gemini 3 Deep Think vs GPT-5.4 Thinking

| Dimension | Gemini 3 Deep Think | GPT-5.4 Thinking |
|---|---|---|
| Context window | 2,000,000 tokens | 1,000,000 tokens |
| ARC-AGI-2 | 84.6% | Not published |
| Reasoning speed | 15–90 sec (slower) | 10–60 sec (faster) |
| Codeforces Elo | 3,455 | Lower |
| Multimodal | Native (all modalities) | Native |
| API cost (output) | Invite-only | ~$15.00/1M tokens |
| Consumer access | $249.99/mo (US only) | $20/mo (ChatGPT Plus) |
| Reasoning transparency | Less visible | More visible (chain-of-thought) |

The benchmarks clearly favor Deep Think on scientific and mathematical tasks, and the 2x context window advantage is real and valuable. But the accessibility and transparency gaps are significant. GPT-5.4 Thinking is available to any ChatGPT Plus subscriber for $20 per month — 12.5x cheaper for consumer access — and OpenAI's models have historically exposed more of their intermediate reasoning to users, which matters in research and debugging contexts where you want to understand why the model reached a conclusion, not just what conclusion it reached.

For teams building agentic systems and pipelines, GPT-5.4 Thinking's faster response times (30–40% faster on equivalent tasks) may outweigh Deep Think's reasoning quality advantage for all but the most complex steps in the workflow. According to the AiZolo comparison analysis, the practical performance gap on real-world coding and writing tasks is smaller than the benchmark gap suggests — Gemini's advantage concentrates in pure scientific/mathematical domains.

When to choose Deep Think over GPT-5.4:

- You need a context window larger than 1M tokens.

- Your use case is squarely in scientific, mathematical, or engineering reasoning.

- You are already in the Google Cloud ecosystem and the Cloud credits offset much of the price.

When to choose GPT-5.4:

- You need faster responses.

- You value visible reasoning traces.

- You want broad access without a $249.99 commitment.

- You are outside the US.

Gemini 3 Deep Think vs Claude Opus 4.6 Extended Thinking

| Dimension | Gemini 3 Deep Think | Claude Opus 4.6 |
|---|---|---|
| ARC-AGI-2 | 84.6% | 68.8% |
| Context window | 2,000,000 tokens | 1,000,000 tokens |
| OSWorld (computer use) | Not applicable | 72.7% |
| SWE-bench (coding) | Not published | 80.8% |
| API input cost | Invite-only | $5.00/1M tokens |
| Consumer access | $249.99/mo (Ultra) | $200/mo (Claude Max 20x) |
| Multimodal | Text, image, video, audio | Text, image |

This comparison is more nuanced than Deep Think versus GPT-5.4. Claude Opus 4.6 is the stronger choice for software engineering tasks — the 80.8% SWE-bench score is the best published number in that category, and Anthropic's models have historically performed well on the kind of structured, multi-file code editing and refactoring tasks that software teams actually do. Deep Think wins on raw scientific reasoning and the context window, but it does not have a published SWE-bench score.

Claude Opus 4.6 also leads on computer use via the OSWorld benchmark (72.7%), which matters if you are building agentic workflows that need to interact with desktop applications or web interfaces. Deep Think has no equivalent computer use capability.

On the other hand, Deep Think's native multimodal support across all four modalities (text, images, video, and audio) is broader than Opus 4.6's text-and-image support. If your use case involves analyzing video content, audio recordings, or cross-modal reasoning, Deep Think has a structural advantage.

The price difference is smaller than with GPT-5.4: Claude Max 20x at $200/month versus Google AI Ultra at $249.99/month. For researchers or enterprises weighing both, the $50/month difference probably matters less than which model actually performs better on their specific tasks — and that answer will be highly use-case dependent. Per Chrome Unboxed's hands-on review, Deep Think's scientific reasoning advantages are genuine but limited to a narrower task domain than the headlines imply.

Pros and Cons of Gemini 3 Deep Think

Pros

- Benchmark-leading scientific reasoning — ARC-AGI-2 at 84.6% is the highest verified score of any AI system as of launch; gold medals at IMO, IPhO, and IChO are not just benchmark theatre

- 2-million-token context window — uniquely valuable for full-codebase analysis, lengthy research corpora, and legal discovery workflows that exceed what any other frontier model can ingest in a single session

- Multi-path reasoning architecture — exploring multiple solution strategies simultaneously catches edge cases and logical flaws that sequential chain-of-thought misses

- thinking_level API parameter: per-request control over reasoning depth gives developers unusual cost optimization levers in production agentic systems

- Native multimodal reasoning across text, images, video, and audio in a single model without separate pipeline routing

- Thought signatures for multi-turn API interactions maintain reasoning continuity across conversation turns

Cons

- $249.99/month is prohibitively expensive for individuals and most small teams — Claude Pro is $20/month, ChatGPT Plus is $20/month

- US-only at launch — if you are outside the United States, Deep Think is simply not available to you through the consumer product

- Slow: 15 to 90 seconds on complex queries versus 10 to 60 seconds for GPT-5.4 Thinking on equivalent tasks, so Deep Think takes roughly 1.5x as long; unsuitable for latency-sensitive applications

- API access is invite-only — no self-serve API for most developers as of April 2026; you cannot just sign up and start building

- Less transparent reasoning traces — intermediate thinking steps are not surfaced to users in the same way as OpenAI's extended thinking output, which makes debugging difficult

- Ecosystem lock-in — deep ties to Google Workspace and Google Cloud mean the model fits better into some infrastructure stacks than others

- Narrower use case than benchmarks imply — genuinely best-in-class for science, math, and engineering, but not necessarily better than cheaper alternatives for everyday builder tasks

Who Should Use Gemini 3 Deep Think?

Based on the benchmarks, the real-world use cases, and the pricing structure, there are clear profiles for whom this makes sense — and equally clear profiles for whom it does not.

Research scientists and academics are the primary audience, and this is where Deep Think earns its price tag most straightforwardly. If you are doing mathematical physics, materials science, computational chemistry, or any field where peer-reviewed reasoning quality is the metric that matters, Deep Think is the best tool available. The combination of PhD-level benchmark performance and the ability to ingest an entire paper library in a single context session is a genuine research productivity multiplier. The documented use cases at Rutgers and Duke University illustrate exactly this profile.

Engineers working on complex design problems — especially those involving multi-variable optimization, materials selection, or systems where the design space is large and the constraints are non-obvious — benefit from Deep Think's multi-path exploration in ways that faster, simpler models cannot replicate. The sketch-to-3D-printable capability is an early demonstration of how this plays out in practice.

Enterprises building reasoning-heavy AI pipelines, such as in-depth legal document review, financial modeling, or R&D analysis, are a good fit when the quality of automated reasoning has a direct dollar value attached to it. For these workflows, the $249.99/month subscription, or API usage once an early access invitation comes through, may be easily justified if the model saves dozens of hours of skilled professional time per month.

Developers building cost-optimized agentic workflows who can get API access: the thinking_level parameter makes Deep Think a uniquely flexible backbone for complex agentic systems where different steps require different reasoning depths, and being able to control this per-request rather than routing to different models is a significant architectural simplification.

Who should not use Gemini 3 Deep Think:

- Casual users doing general writing, coding assistance, or everyday Q&A; standard Gemini 3 Pro or Flash handles all of that at a fraction of the cost.

- Anyone outside the US, at least until Google expands availability.

- Speed-sensitive applications where 15 to 90 second response times are unacceptable.

- Developers who need a self-serve API today and cannot wait for an early access invitation.

- Budget-constrained teams for whom the $249.99/month commitment is a significant financial decision without a clear ROI case.

How to Access Gemini 3 Deep Think

There are two paths to access, and they have very different requirements.

Consumer access via Google AI Ultra: Subscribe to Google AI Ultra at ai.google.com for $249.99/month, or $124.99/month for the first three months if the promotional offer is still active (Engadget's coverage of Google AI pricing details the promotional structure). Deep Think is accessible through the Gemini interface for US-based subscribers. There is nothing additional to set up; it is available as a mode within the standard Gemini product once your subscription is active.

API early access: Scientists, engineers, and enterprise developers need to request access through the Google AI for Developers program. The request link is in the official Deep Think announcement. Approval timelines vary — priority appears to be given to research institutions, enterprise accounts, and developers with specific high-value use cases. If you are a solo developer looking to experiment, the waitlist may be longer. The Gemini API thinking documentation covers the technical integration details for when you do get access, including the thinking_level parameter specification and thought signature handling.

For teams that need Deep Think access today without waiting for Google's early access program, Kie.ai and similar third-party API providers offer unofficial access at lower price points — though with the reduced reliability and support guarantees that come with any unofficial API wrapper.

Verdict — 8.2/10

Gemini 3 Deep Think is, by the numbers, the most capable scientific and mathematical reasoning AI system ever released to a general audience. The ARC-AGI-2 score, the Olympiad gold medals, the Codeforces grandmaster ranking: these are not marketing claims but independently verified results that reflect genuine advances in how the model approaches hard problems. The multi-path reasoning architecture is a legitimately different approach from sequential chain-of-thought, and the difference shows in the benchmark results.

But here is the honest assessment: most builders do not need the most capable scientific reasoning system. Most builders need a model that is good enough at coding, reasonably fast, affordable to run at scale, and flexible enough to handle the varied tasks that show up in a real product. For that profile, standard Gemini 3 Pro or a well-priced API competitor serves perfectly well at a fraction of the cost.

Where Deep Think earns a strong recommendation is specifically in contexts where the depth of reasoning has measurable consequences — research that needs to be right, not just plausible; engineering designs where a missed edge case is expensive; legal or financial analysis where quality and thoroughness have direct dollar values attached. In those contexts, the extra reasoning quality is worth the premium, and the 2-million-token context window enables workloads that simply are not possible with any other current model.

The $249.99/month price point is high but not absurd for professional and enterprise users — it compares favorably to a monthly software license for many professional research tools, and the bundled Google Cloud credits partially offset the cost for existing GCP customers. The US-only limitation at launch and the invite-only API access are real friction points that will hopefully resolve as Google scales availability.

The score of 8.2 out of 10 reflects a model that excels dramatically within a specific domain and falls short primarily on accessibility, price, and geographic availability rather than capability. If your work falls within that domain — and you know whether it does — this is the best reasoning tool currently available. If your work does not fall within that domain, you are paying for capability you will not use.

Sources used in this review: Google's official Deep Think announcement, Gemini API thinking documentation, Gemini API pricing, VKTR benchmark analysis, Chrome Unboxed hands-on review, Marketing AI Institute review, AiZolo comparison, BenchLM aggregate comparison, Eesel's Google AI Ultra breakdown, and Engadget's pricing coverage.

Affiliate Disclosure: Some links in this article may be affiliate links. If you purchase a subscription through these links, StackBuilt AI may earn a small commission at no additional cost to you. We only recommend tools we have personally tested and believe in. Read our full affiliate disclosure.