I have been watching the AI video space go absolutely sideways over the past year or so, with new models dropping all the time, and honestly I have not often been impressed with what I saw. That said, the Will Smith eating spaghetti renderings are a little better (and less funny) these days. The models seem to be coming out faster than most people can actually test them, and then suddenly we ended up with two Chinese (I know … I know) AI video tools, Seedance 2.0 and Kling 3.0, both making very loud claims about being the best thing out there. I was not sure what to think at first, because both of them are genuinely impressive in ways that are hard to dismiss. I also have strict guidelines in my professional life about Chinese tech, so the hairs on my neck always go up out of habit.

Despite my hesitations about where these two models come from, this Seedance 2.0 vs Kling 3.0 comparison is worth your time because these aren’t just two similar products competing on the same feature set. They are built around totally different philosophies (one more directorial, one more production-ready), which means the “better” one really does depend on what you are trying to do. That puts you in the driver’s seat to pick the tool that fits your needs.

Here is What Makes This Comparison Interesting

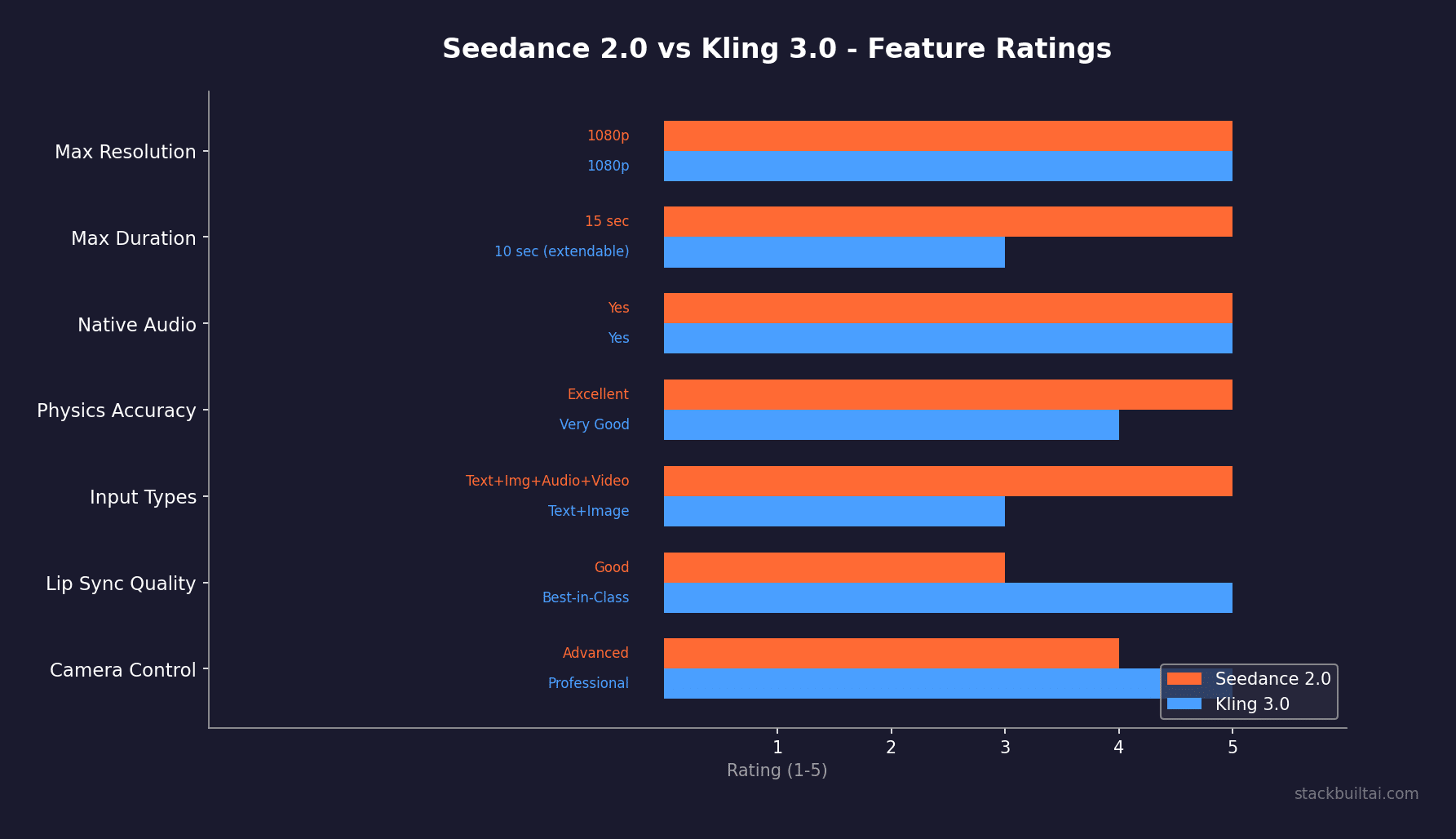

If you looked at their spec sheets side by side, you might think these tools are basically the same thing. Both Seedance 2.0 and Kling 3.0 generate up to 15-second clips, include native audio generation, and handle real-world physical motion, and both are competing for the same core audience of video creators, marketers, and filmmakers who want AI to do the heavy lifting on production.

Once you are under the hood, though, the approaches are very different. The gap between these two tools is really a story about two different bets on what the future of AI video creation looks like (kinda like VHS and Betamax), and the answer to which one you should use is more specific to your workflow than most comparison articles will admit.

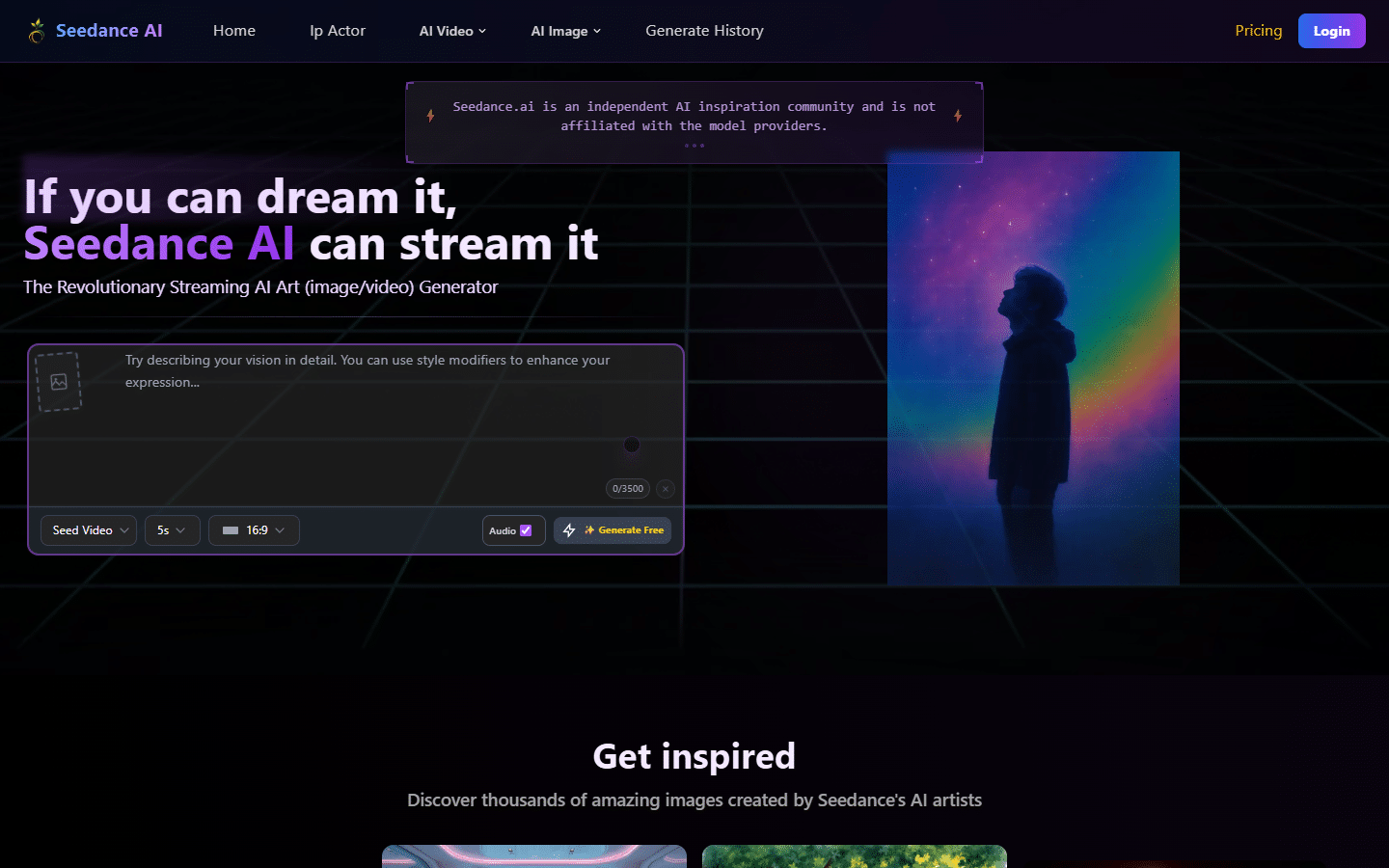

Seedance comes from ByteDance (the company behind TikTok), which means they have an enormous trove of video data to work with and serious infrastructure behind the product. Kling comes from Kuaishou, which is another Chinese tech giant that has been quietly building one of the more polished AI video pipelines in the industry for the past couple of years – just not with the name brand recognition that you get with ByteDance. Both companies have the resources and training data to compete at the top of the market, and both are clearly doing so.

What Seedance 2.0 Brings to the Table

One thing that quickly caught my attention with Seedance is its multimodal architecture, because it is not just a text-to-video tool. It accepts text, image, audio, and video inputs simultaneously, which opens up a kind of directing workflow that most other tools simply do not offer right now, and for creators who have spent time fighting with text-only prompts to get a consistent visual style, that difference is not a small thing. Think of this as prompting on steroids.

Multimodal Inputs and Reference Control

You can upload up to nine images, three video clips, and three audio clips as references all at once. If you have ever tried to get a consistent visual style out of a text-only prompt, you understand why that matters. Text descriptions of visual aesthetics are inherently inconsistent in a way that actual reference materials are not. That sounds obvious when you say it out loud, but the tools that actually act on it are rarer than you might expect, although the tech is evolving quickly. Describe “warm cinematic lighting” in text and you get fifteen different interpretations. Upload a reference frame and you get something that actually matches what you were picturing, and you can lock that lighting in for future use because you have a concrete reference.
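To make those limits concrete, here is a minimal Python sketch of how I might sanity-check a set of references before submitting a generation. To be clear, this is not Seedance’s API: the `ReferenceBundle` class, its field names, and the file names are all hypothetical, and the only things taken from the description above are the nine/three/three limits.

```python
from dataclasses import dataclass, field

# Per-generation reference limits as quoted in this article (not from official docs).
MAX_IMAGES = 9
MAX_VIDEOS = 3
MAX_AUDIO = 3


@dataclass
class ReferenceBundle:
    """Hypothetical container for the references attached to one generation."""
    prompt: str
    images: list[str] = field(default_factory=list)
    videos: list[str] = field(default_factory=list)
    audio: list[str] = field(default_factory=list)

    def problems(self) -> list[str]:
        """Return readable limit violations; an empty list means the bundle fits."""
        issues = []
        for label, items, limit in (
            ("images", self.images, MAX_IMAGES),
            ("videos", self.videos, MAX_VIDEOS),
            ("audio", self.audio, MAX_AUDIO),
        ):
            if len(items) > limit:
                issues.append(f"{label}: {len(items)} supplied, limit is {limit}")
        return issues


# Illustrative file names only.
bundle = ReferenceBundle(
    prompt="Product reveal on a rainy street, warm cinematic lighting",
    images=["lighting_ref.png", "brand_palette.png"],
    videos=["camera_move_ref.mp4"],
    audio=["music_bed.wav"],
)
print(bundle.problems())  # [] because every list is within its limit
```

The point of keeping the text prompt alongside the files is that the prompt says what should happen while the references pin down how it should look and sound.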

The physics accuracy is where Seedance has been getting the most attention from the press and the creative community. Complex interactions between things on screen, motion stability, and real-world physical laws applied to things like water, fabric, and structural collapses all get rendered with a level of specificity that is really impressive. Forbes covered it, noting that it “nails real world physics and hyper-real outputs,” which is not the kind of language you throw around casually in a technology review. I see this stuff and get so excited for what is to come for movies and video games and the blending of the two with AR/VR.

The CNN coverage went further and described it as “so good it spooked Hollywood,” and there was actually a period where Disney accused ByteDance of using proprietary IP to train the model. That is the kind of problem you only have if your outputs are genuinely convincing at a professional level. I will be honest (and please forgive me here): I am pretty happy to hear that Hollywood is spooked, because the drop-off in decent movies over the last 10 years is staggering, but I digress. The copyright situation is something I will come back to, but the quality signal there is real and only improving (scary again for Hollywood).

Camera and Performance Control

Seedance gives you actual director-level control: camera movement, lighting direction, and what they describe as performance direction (the ability to influence not just what happens in a scene but how it is being shot, which is crazy). That is a meaningful step toward giving creators real directorial control rather than prompt guessing games, and it is one of the reasons advanced users tend to find Seedance more exciting once they get past the initial learning curve.

The 15-second clips come with synchronized native audio, so you aren’t doing post-production audio matching after the fact. Audio is a critical and often underappreciated part of filmmaking. You usually only notice it once you try to film something yourself and it feels off, and it turns out to be the audio. Access through the Loova platform, with unlimited generation for subscribers, makes it reasonably approachable for people who want to experiment heavily without watching a credit counter drain during a creative session.

What to Know Before You Commit

Public availability is still limited compared to Kling at this time. The benchmark claims come mostly from ByteDance’s own internal testing rather than independent audits, and the copyright situation around the training data is murky in a way that could matter depending on what you are making and for whom. For casual experimentation that is probably fine, and I do think the tool is worth experimenting with. For high-stakes commercial production, it is a real variable worth thinking through. As with a lot of AI (and crypto DeFi) products, this will probably have to be a consideration until regulations get worked out. We’re living in the Wild West.

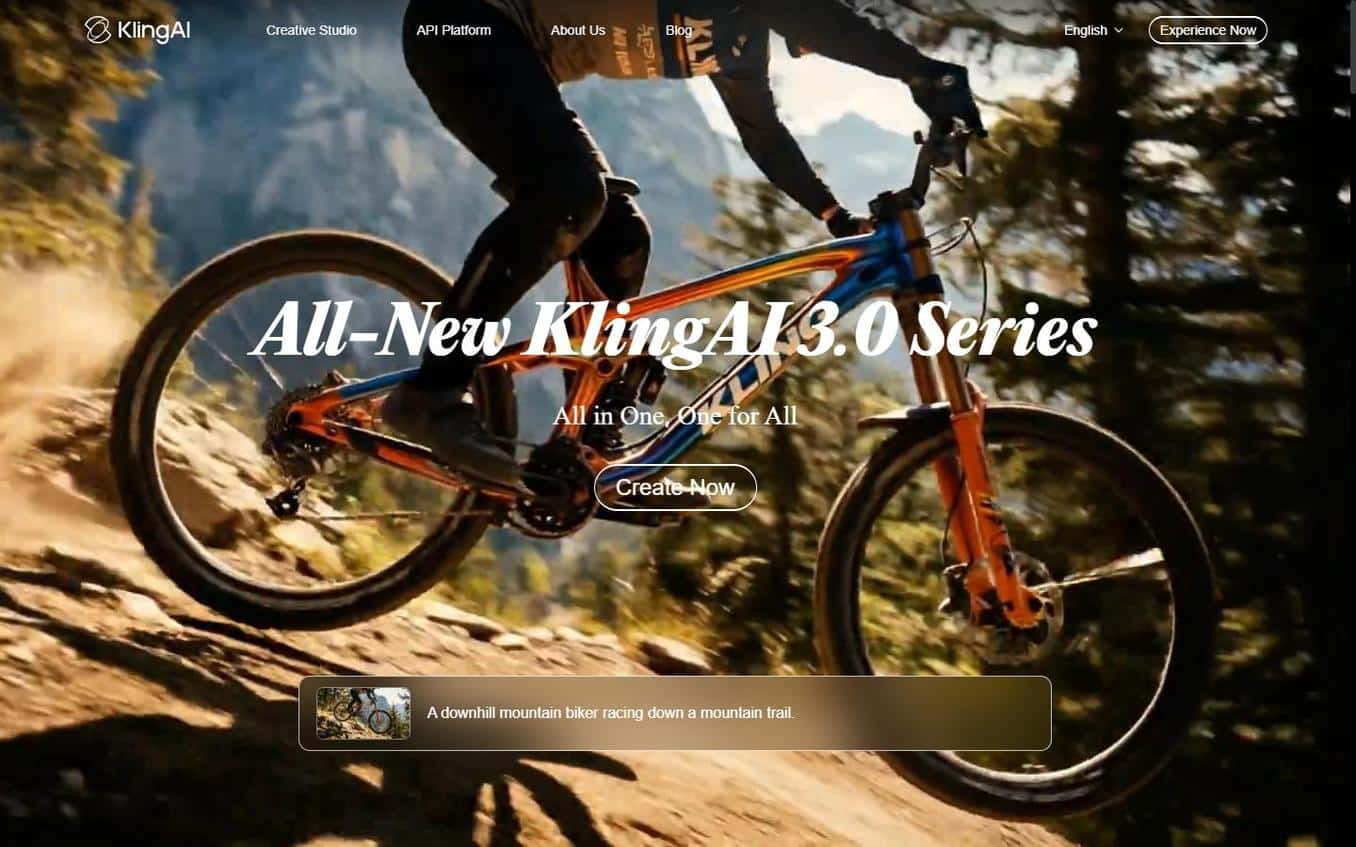

What Kling 3.0 Brings to the Table

Kling has also been doing impressive work for a while now, and with version 3.0, Kuaishou has made a serious push to build the most production-ready AI video tool available to the general public (a big difference from Seedance). The results have made people sit up and take notice, and the endorsements are coming from outside reviewers rather than the company’s own marketing materials.

The Free Tier That Actually Works

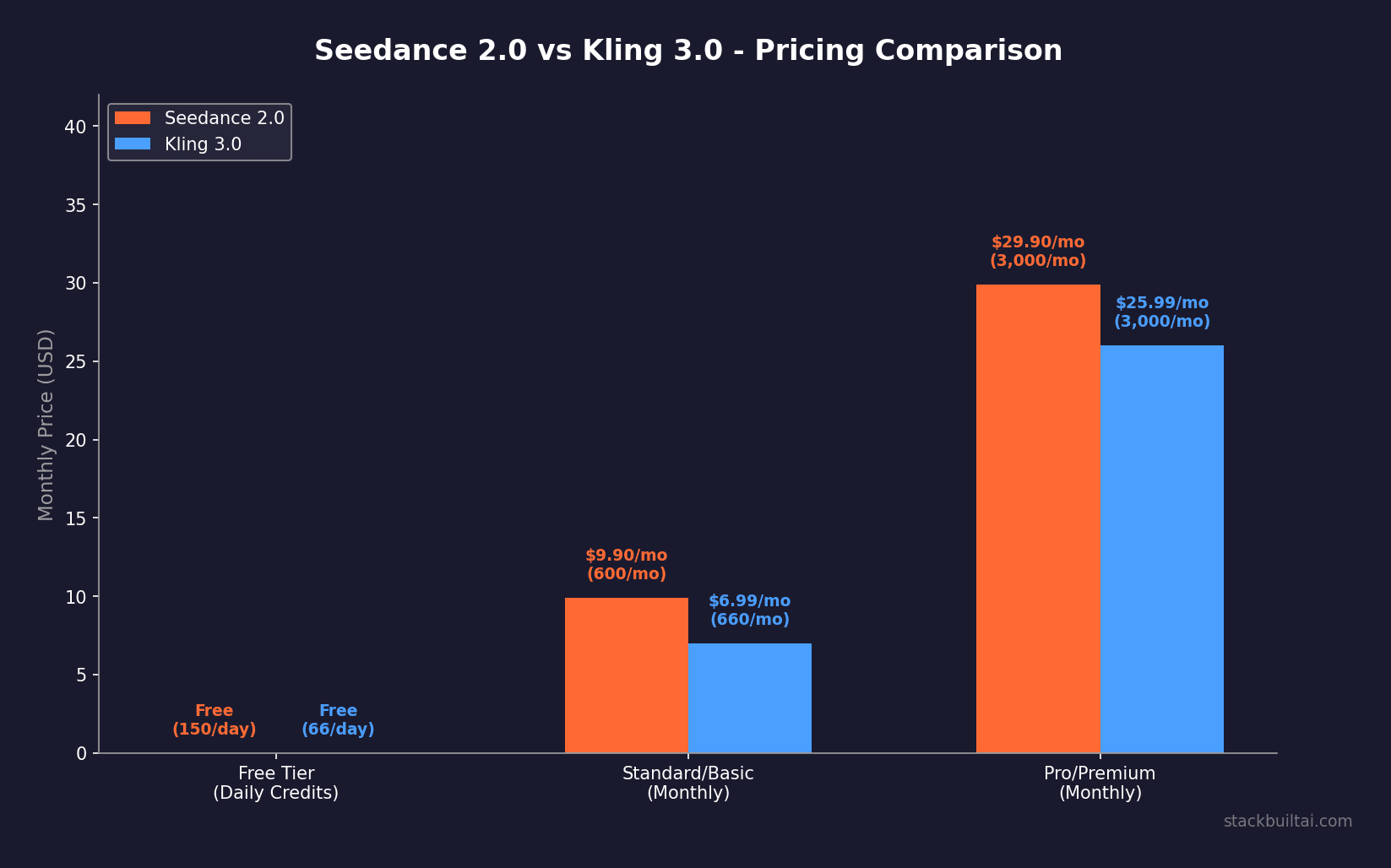

The thing I keep coming back to with Kling is the pricing structure, because the free tier gives you 66 credits per day with no credit card required. That is the most generous free tier I have seen in AI video by a considerable margin, and it means anyone can actually test whether this tool works for their specific use case before spending a dollar, which (given how varied people’s production needs are) matters a lot more than it might sound on paper. Giving legitimate access at the free tier is a great way to bring people into the paid tiers later.

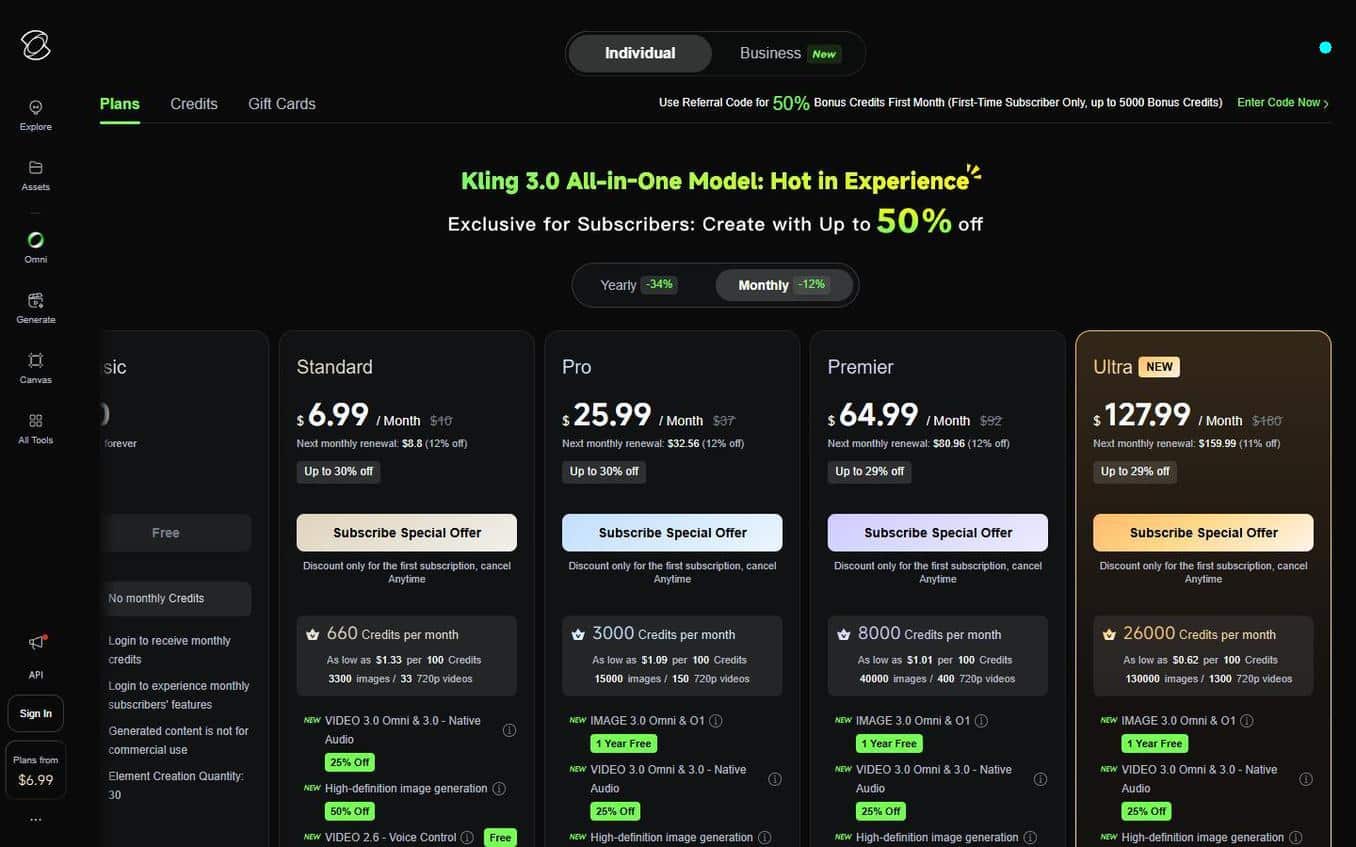

The paid tiers are straightforward. Standard at $6.99/month gets you 660 credits plus 1080p with no watermark, Pro at $25.99/month gives you 3,000 credits, and the Ultra tier at $59.99/month unlocks 8,000 credits and full Ultra HD output. That progression makes sense for how most people would actually adopt this kind of tool: starting free, moving up as they find real use for it, and only hitting the top tier when production volume actually justifies it. Crazy how that makes sense, right? Again, I think some other companies could take note of this pricing model.
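If you want to compare the tiers on a per-credit basis, the arithmetic is simple enough to sketch in Python. The prices and credit counts are the ones quoted above. The function names are mine, and I am assuming the paid credits are a monthly allowance and that a free user claims the full daily allowance every day.

```python
# Paid tiers as quoted in this article: (monthly price in USD, credits included).
TIERS = {
    "Standard": (6.99, 660),
    "Pro": (25.99, 3000),
    "Ultra": (59.99, 8000),
}

FREE_CREDITS_PER_DAY = 66  # free-tier allowance quoted in this article


def cost_per_credit(price_usd: float, credits: int) -> float:
    """Effective price of a single credit in US dollars."""
    return price_usd / credits


def free_credits_over(days: int) -> int:
    """Free credits over a span, assuming the full allowance is used each day."""
    return FREE_CREDITS_PER_DAY * days


for name, (price, credits) in TIERS.items():
    cents = cost_per_credit(price, credits) * 100
    print(f"{name}: {cents:.2f} cents per credit")

print(f"Free tier over 30 days: {free_credits_over(30)} credits")
```

That works out to roughly 1.06, 0.87, and 0.75 cents per credit for Standard, Pro, and Ultra, so the per-credit price drops as you move up. What this does not tell you is how many credits a single clip costs at each quality level, so treat it as a way to compare tiers rather than a production budget.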

Quality and Character Consistency

The Ultra HD native 4K output is impressive, and the character consistency across multiple shots (which has historically been one of the most painful problems in AI video) is handled well enough that people are actually using Kling for multi-shot sequencing and storyboarding rather than just one-off clips. That is a meaningful shift from how earlier models got used, which was mostly for single experimental clips. I can only imagine how wild YouTube and other video platforms are going to be once people master this.

Chase Jarvis, a photographer and creative director whose opinion on visual quality tools carries actual weight, called Kling “likely the best general-purpose video model on the market right now,” and the broader external reviewer consensus lines up with that assessment in terms of motion quality and storytelling consistency.

Native audio in five languages is a feature that matters more than it might seem for creators working on international content, and the integrated audio pipeline means you are working within a single tool rather than stitching things together across platforms. I really feel they have taken a lot of the pain points from other similar platforms and resolved them.

Where Kling Shows Limits

The cost can get real fast if you are iterating heavily at higher quality levels, because credits go faster than you expect during a serious production session, and the render times are slower than some competitors. I don’t know if they’ve been focused on other features rather than speed optimization, but for creators doing rapid creative iteration that friction shows up in a real way.

The Head-to-Head Breakdown

Input Flexibility

Seedance wins this cleanly. Nine images, three video clips, three audio clips as simultaneous references is a fundamentally different capability than what Kling currently offers, and for creators who are working with brand assets, style references, or existing footage, that gap is meaningful in a very practical way.

Visual Quality

This one is close, and I don’t think you can make a clean call without testing both on your specific type of content. The external consensus, which The Silicon Review’s comparison captures well, puts Kling slightly ahead on human realism and naturalistic motion, while Seedance gets the edge on controlled complex action and physics-heavy scenes. That is a useful distinction for figuring out which one fits your project, because most production work is going to lean clearly one way or the other.

Motion Quality

Kling has a real edge for human motion realism. The way people walk, gesture, and interact with objects has a naturalistic quality that shows up repeatedly in comparison videos and in the community consensus among people who have tested both tools extensively. Seedance closes that gap significantly when the scene involves complex physical interactions or structural dynamics, where its physics modeling tends to produce more convincing results.

Audio Integration

This is where the community discussion has been pretty emphatic. I have seen multiple threads where people describe Seedance’s facial performance quality as being in a different category, with facial expressions that truly do not look AI-generated and audio sync that is noticeably ahead of Kling’s current implementation. That tracks with ByteDance’s history working with highly synchronized audio-visual content and with what the CNN coverage described about professional-level quality.

Pricing

If you are spending money, Kling’s structure is more transparent and more accessible at every tier. The free tier makes it really risk-free to start, which Seedance currently can’t match in terms of public access and onboarding simplicity. My push would be to always try free versions first. See if the tool meets your goals, and build a plan around the free tier until you need to move up for whatever reason.

What Real Users Are Saying

The general consensus on both tools is largely positive, as you can imagine, but with some specific caveats worth knowing. Things are rarely that simple, and the headline benchmarks do not always reflect the day-to-day experience when you are actually working through a full production session.

On Kling, the concern I have seen come up pretty often is a performance degradation pattern over the course of a session. One Reddit user described it clearly: “Kling gets worse the longer you use it in one session, around generation 15-20, quality drops.” That is not a dealbreaker for casual use but absolutely matters if you are doing high-volume production work and expecting consistent output throughout. Whether that is a caching issue, a server-side optimization issue, or something else isn’t entirely clear, but it is a real thing that multiple users have reported independently. As I have said in other articles, context and consistency are crucial with AI. Without them you start doubting the output and end up having to double back.

On Seedance, the Reddit sentiment has been enthusiastic about the acting and expression quality. “Seedance’s acting feels on another level – facial expressions don’t look AI-generated at all, audio sync is far ahead of Kling” is representative of what power users are saying, and it lines up with the Forbes and CNN coverage in terms of where the model seems to be really pushing limits. The multimodal input system in particular gets praise from people who have workflow complexity that other tools can’t handle.

YouTube comparison channels have been running these tools side by side on matched prompts, and the results tend to show Kling winning on clean, realistic human scenes and Seedance winning on scenes that require complex physical interactions or heavy reference-matching, which is more or less exactly what you would predict from the architectural differences between the two models.

Here is Who Should Pick Which

Start With Kling 3.0 If:

You are newer to AI video and want to understand what the technology can actually do without spending anything upfront. The free tier is a real place to start, and 66 credits per day is enough to get a genuine feel for whether this fits your production workflow. There is always a chance you will work through the initial excitement and find that AI video isn’t the right tool for your specific project, so not spending money while you figure that out is worth something real.

Kling also makes more sense if you are doing primarily human-focused content (scenes with people talking, moving, emoting) where the realism advantage Kling currently holds will show up visibly in your final output. For teams and agencies doing high-volume output across a range of project types, the transparent credit-based pricing and the quality floor that Kling maintains across varied content types make it the safer production choice overall.

Choose Seedance 2.0 If:

You are working with existing reference materials and want a tool that can actually incorporate all of that into its generation process rather than working from text descriptions alone. The multimodal input architecture is a real differentiator and becomes more valuable the more specific and complex your creative requirements get.

If your work involves physics-heavy scenes, complex structural interactions, or content where physical accuracy matters in a visible way, Seedance’s specific strength in that area is real and has been noticed by people whose judgment on visual quality I take seriously. Advanced creators who want directorial control over camera movement, lighting, and performance notes will find more of those tools baked into Seedance’s current interface.

That said, I don’t think the copyright situation is something to wave away for professional work. The Disney IP concerns were real, and the model’s training data transparency is not where I would want it to be for high-stakes commercial production. That caveat is worth factoring into any decision that involves using this for client work or commercially licensed content.

Where Both of These Are Headed

It is worth saying that both Seedance 2.0 and Kling 3.0 are moving fast (like most popular platforms), and the comparison that is accurate today will shift, probably sooner than you would expect. This space is wildly challenging and exciting because you need to keep up with the tools that you want to use. Kling has been iterating on its session performance issues and Seedance has been working on broadening its public availability, which means the friction points on both sides are likely to narrow over the course of 2026. If you are not ready to use them yet, keep checking in over the coming months. Watch YouTube reviews and see how the video output progresses.

The bigger picture here is that two Chinese AI companies are producing tools that are actually competitive with anything the US market has built, and in some areas are ahead of it, which is a real change from where things stood even a year ago. I think that competitive pressure is ultimately good for everyone trying to make interesting video content without a Hollywood-scale budget (not that they put out anything great often) – and it is only going to get more intense through 2026.

For more context on where AI video fits relative to other creative tools, our overview of the best AI tools in 2026 has a broader look at the landscape. If you are specifically interested in how AI is changing creative production workflows, our coverage of AI tools for content creators goes into the adjacent tools worth knowing about. And if you have been looking at the text-to-video space with a wider lens, we covered the best AI video generators available right now with a broader field of competitors for context.

My final take on the Seedance 2.0 vs Kling 3.0 decision is to start with Kling. The free tier is a real gift, and the output quality is high enough that you will know quickly whether AI video belongs in your workflow. Then look seriously at Seedance once you have a clearer sense of what your specific production needs are, because the multimodal control and physics accuracy are impressive capabilities that become more relevant the more specific and demanding your creative requirements get.

Keep Reading

If you found this useful, I’d recommend checking out Best AI Video Generators in 2026 – The Full Breakdown and AI Tools for Content Creators – What is Actually Worth Using. We’re covering more tools like this regularly at StackBuilt and I’m trying to keep the focus on things that actually matter for people who are building real businesses – not just whatever is trending on Product Hunt that week.