
NVIDIA released Nemotron 3 Super on March 11, 2026, and it is one of the more technically interesting open-weight models to come out this year: a 120-billion-parameter mixture-of-experts model that activates only 12 billion of those parameters at inference time, built on a hybrid Mamba-Transformer architecture that is still rare in the open-weight space.

The release landed alongside GTC 2026, where NVIDIA also announced the Nemotron Coalition, a broader push to rally AI labs around open frontier model families. Together, the two announcements make this a significant moment for developers who care about running high-capability models on their own infrastructure.

This article breaks down what was actually released, what the benchmark numbers suggest in practice, how the model stacks up against the alternatives, and which questions remain unanswered.

What Was Actually Announced

The core facts from the official NVIDIA developer blog:

- Model size: 120B total parameters, 12B active parameters at inference (mixture-of-experts routing)

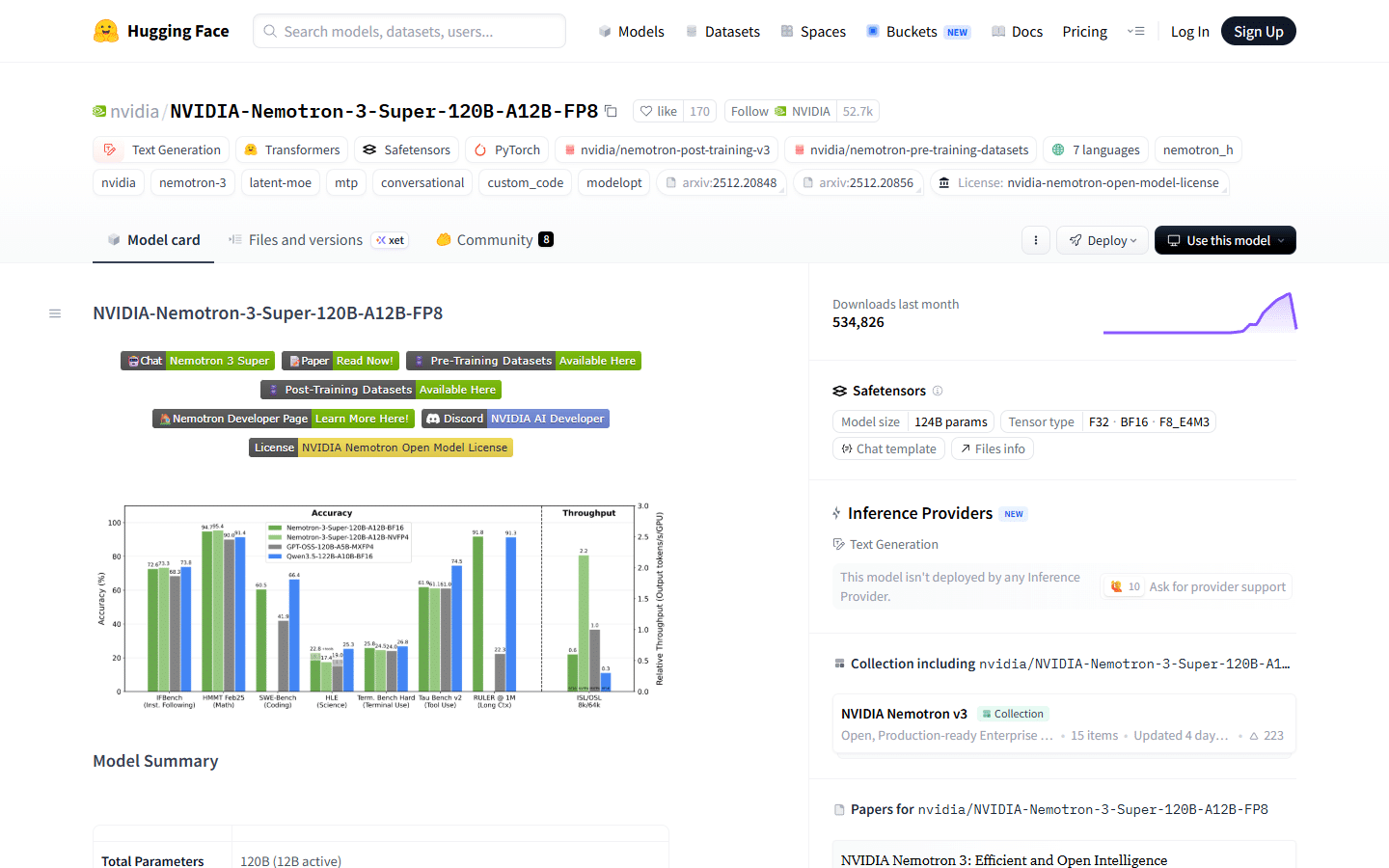

- Architecture: Hybrid Mamba-Transformer-MoE – Mamba-2 layers for sequence processing, Transformer attention layers for reasoning, and a “Latent MoE” that compresses token embeddings into a low-rank latent space before routing (allowing 4x more experts at the same compute cost)

- Context window: Native 1M-token context

- Throughput vs. comparables: 2.2x higher inference throughput than GPT-OSS-120B, and 7.5x higher throughput than Qwen3.5-122B, measured on 8K input / 64K output on NVIDIA B200 GPUs

- PinchBench (OpenClaw agent benchmark): 85.6%, the best open model score in its class at release

- Training: Pretrained on 25 trillion tokens (10 trillion unique curated tokens), post-trained with 40 million supervised and alignment samples, and reinforcement-learned across 21 environment configurations with over 1.2 million rollouts via NVIDIA NeMo Gym

- Precision: Pretrained natively in NVFP4, NVIDIA’s 4-bit floating-point format for Blackwell GPUs, which NVIDIA reports as delivering 4x faster inference on Blackwell than FP8 on Hopper, with comparable accuracy

- License: NVIDIA Open Model License Agreement (updated October 2025) – commercial use permitted

- Access: Weights on Hugging Face, via NVIDIA NIM, and through hosted inference on Perplexity Pro, OpenRouter, build.nvidia.com, Fireworks AI, Together AI, Nebius, Modal, Lightning AI, Cloudflare Workers AI, Google Cloud Vertex AI, Oracle OCI, and CoreWeave
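To put the headline numbers in perspective, here is a rough back-of-envelope sketch of what 120B total / 12B active parameters means for weight storage at different precisions. It is simple arithmetic, not a measurement: it ignores quantization scale metadata, the KV cache, and activations, so real memory use will be higher. The function names are our own and purely illustrative.

```python
# Back-of-envelope sizing for a 120B-total / 12B-active MoE model.
# Assumption: raw weights only; no scale factors, KV cache, or activations.

TOTAL_PARAMS = 120e9   # total parameters (from the spec list above)
ACTIVE_PARAMS = 12e9   # parameters active at inference (from the spec list above)

# Nominal bits per parameter for each storage format.
BITS_PER_PARAM = {"bf16": 16, "fp8": 8, "nvfp4": 4}


def weight_gigabytes(params: float, bits: int) -> float:
    """Raw weight storage in GB (10^9 bytes) at a given bit width."""
    return params * bits / 8 / 1e9


def active_fraction(total: float, active: float) -> float:
    """Share of the parameters that participate in each forward pass."""
    return active / total


if __name__ == "__main__":
    print(f"active fraction: {active_fraction(TOTAL_PARAMS, ACTIVE_PARAMS):.0%}")
    for name, bits in BITS_PER_PARAM.items():
        print(f"{name}: ~{weight_gigabytes(TOTAL_PARAMS, bits):.0f} GB of weights")
```

The point to take away is that the 12B active figure drives compute per token, while memory is still governed by the full 120B, because a mixture-of-experts model typically needs all of its experts loaded to serve arbitrary inputs.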

What This Means for Developers and Business Owners

What makes Nemotron 3 Super interesting for developers is not the raw benchmark numbers but the specific combination of efficiency and context length that the architecture unlocks. Most teams running agentic workflows have run into at least one of two painful problems: the “thinking tax” (long chains of reasoning dramatically increase token costs and latency) or the “context explosion” (multi-step agents accumulate context until smaller models lose coherence or larger models become prohibitively expensive to run).

Nemotron 3 Super was built to address both problems explicitly, and the 1M-token native context window is the hardest spec to find elsewhere in the open-weight tier: GPT-OSS-120B’s effective context collapses at 1M tokens, scoring just 22.3% on RULER-1M versus Nemotron 3 Super’s 91.75%.
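The “context explosion” is easy to underestimate, so here is a minimal sketch of how it compounds. It assumes a naive agent loop that re-sends the full history on every step and adds a fixed number of tokens per step; the step count and token sizes are made-up illustrative values, not measurements from any real agent.

```python
def context_at_step(step: int, tokens_per_step: int, system_tokens: int = 0) -> int:
    """Context size at a given 1-based step: system prompt plus all history so far."""
    return system_tokens + step * tokens_per_step


def cumulative_input_tokens(steps: int, tokens_per_step: int, system_tokens: int = 0) -> int:
    """Total input tokens processed across all steps when each step
    re-sends the entire accumulated context (no prefix caching)."""
    return sum(
        context_at_step(i, tokens_per_step, system_tokens)
        for i in range(1, steps + 1)
    )


if __name__ == "__main__":
    # Hypothetical agent: 50 steps, 4,000 new tokens per step, 2,000-token system prompt.
    steps, per_step, system = 50, 4_000, 2_000
    print("context at final step:", context_at_step(steps, per_step, system))
    print("total input tokens processed:", cumulative_input_tokens(steps, per_step, system))
```

The context itself grows linearly, but the total tokens processed grow roughly with the square of the step count. Prefix caching can soften the cost in practice, but the final context still has to fit in a window the model can actually use.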

For business owners thinking about where this fits, NVIDIA’s own deployment framing is telling: the suggested pattern is to use Nemotron 3 Nano (the 4B companion model released alongside Super) for routine individual agent steps, route complex multi-step planning and reasoning to Super, and reserve proprietary frontier models for the most demanding expert-level tasks. That tiered approach, if built thoughtfully, could reduce both API costs and latency in production pipelines compared to routing everything through a single heavyweight model.
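As a rough illustration of that tiered pattern, the routing decision could be sketched as below. Everything here is an assumption made for the example: the `Task` fields, the thresholds, and the tier labels are hypothetical placeholders rather than real model IDs or anything NVIDIA has published.

```python
from dataclasses import dataclass
from enum import Enum


class Tier(Enum):
    # Placeholder labels for illustration only, not real model identifiers.
    NANO = "nemotron-3-nano"
    SUPER = "nemotron-3-super"
    FRONTIER = "proprietary-frontier"


@dataclass
class Task:
    description: str
    planning_steps: int         # hypothetical estimate of reasoning/planning steps
    context_tokens: int         # hypothetical estimate of context size
    needs_expert: bool = False  # hypothetical flag set upstream (rule or human)


def choose_tier(task: Task, max_nano_steps: int = 1, max_nano_context: int = 32_000) -> Tier:
    """Pick the cheapest tier that plausibly fits the task.

    The thresholds are arbitrary defaults for this sketch; tune them
    against your own latency, cost, and quality measurements.
    """
    if task.needs_expert:
        return Tier.FRONTIER
    if task.planning_steps > max_nano_steps or task.context_tokens > max_nano_context:
        return Tier.SUPER
    return Tier.NANO


if __name__ == "__main__":
    for t in (
        Task("format a tool result", planning_steps=1, context_tokens=2_000),
        Task("plan a multi-file refactor", planning_steps=6, context_tokens=150_000),
        Task("resolve an ambiguous legal question", 3, 5_000, needs_expert=True),
    ):
        print(f"{t.description!r} -> {choose_tier(t).name}")
```

In a real pipeline the hard part is estimating task difficulty up front; a static rule like this is only a starting point, and many teams end up adding a fallback that escalates to the next tier when the cheaper model fails a validation check.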

Real-world evaluations have reinforced this framing. Greptile’s code review evaluation found that Nemotron 3 Super returned a useful, multi-finding code review in 12.5 seconds with just two tool calls, surfacing three substantive bugs in a single pass – which is honestly impressive for a model at this size running on GPU cloud infrastructure rather than a closed frontier API.

CodeRabbit adopted it for their context-gathering and summarization stage in AI code review, specifically citing the 1M context window and multi-token prediction as the reasons for the upgrade from Nemotron 3 Nano.

How It Compares to the Alternatives

The open-weight model space at the 120B class is competitive right now, so here is an honest read of where Nemotron 3 Super sits relative to the other serious options, drawing from NVIDIA’s technical report and independent evaluations:

vs. GPT-OSS-120B (OpenAI’s open-weight model): Nemotron 3 Super consistently outperforms or matches GPT-OSS-120B across agentic benchmarks, and the throughput gap (2.2x) is significant for production workloads – but the most decisive difference is long-context retention, where GPT-OSS-120B’s 22.3% RULER-1M score versus Nemotron’s 91.75% is not close. Nemotron also scores notably higher on SWE-Bench Multilingual (45.78% vs. 30.80%), which is a genuine differentiator for teams working across non-English codebases.

vs. Qwen3.5-122B-A10B: This is a more competitive comparison, and Qwen3.5 leads Nemotron 3 Super on several benchmarks – MMLU-Pro (86.70% vs. 83.73%), GPQA scientific reasoning (86.60% vs. 79.23%), SWE-Bench Verified (66.40% vs. 60.47%), and most TauBench tool-use tasks. Nemotron 3 Super leads on LiveCodeBench (81.19% vs. 78.93%), HMMT math with tools (94.73% vs. 89.55%), and crucially on throughput (7.5x higher) and on long-context tasks that exceed Qwen3.5’s 128K hard cap. I think the honest framing is: if you need a single model that performs well across both agentic workflows and general knowledge tasks, Qwen3.5 is more versatile; if you are building a multi-agent orchestration stack on NVIDIA hardware and throughput matters, Super’s profile is more attractive.

vs. DeepSeek V3.2: DeepSeek V3.2 competes at 685B total parameters (37B active) with an MIT license and a broader general-purpose profile. Nemotron 3 Super does not match DeepSeek’s raw intelligence scores across general benchmarks, but it is a fraction of the size, runs efficiently on NVIDIA Blackwell infrastructure, and has a permissive commercial license that doesn’t carry the data-sovereignty concerns some enterprises have flagged with DeepSeek’s Chinese origins.

vs. Llama 3.3 / Mistral: Nemotron 3 Super is in a higher size class than Llama 3.3 70B or Mistral Large, and it generally outperforms both on complex reasoning and agentic benchmarks – though for teams that just need a solid 70B-class model for standard tasks, the hardware requirements for a 120B model are meaningfully higher (A100/H100 or cloud GPU instances, per MindStudio’s deployment guide).

One thing worth noting from Qodo’s independent evaluation: Nemotron 3 Super achieved the highest precision of any open model in their code review benchmark at 73.4%, beating Qwen3.5-397B (66.4%) and GPT-OSS-120B (46.9%) – precision matters more than recall in code review because false positives erode developer trust.
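For readers less familiar with the distinction, here is a small sketch of how precision and recall are computed for a code review tool. The counts are invented purely to illustrate the formulas and have nothing to do with Qodo’s actual data.

```python
def precision(true_positives: int, false_positives: int) -> float:
    """Of everything the reviewer flagged, what fraction was a real issue?"""
    flagged = true_positives + false_positives
    return true_positives / flagged if flagged else 0.0


def recall(true_positives: int, false_negatives: int) -> float:
    """Of all real issues present, what fraction did the reviewer flag?"""
    actual = true_positives + false_negatives
    return true_positives / actual if actual else 0.0


if __name__ == "__main__":
    # Hypothetical review run: 40 comments posted, 30 of them real issues,
    # and 20 real issues that were never flagged.
    tp, fp, fn = 30, 10, 20
    print(f"precision: {precision(tp, fp):.2f}")
    print(f"recall:    {recall(tp, fn):.2f}")
```

In this made-up run, one in four comments is noise. That ratio is what developers feel directly in a pull request, which is why a high-precision reviewer tends to be trusted even when it misses some issues.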

What We Are Still Waiting to See

The benchmarks at launch are NVIDIA’s own (or benchmarks where NVIDIA chose the harness), and a few things remain unclear from the available evidence. The SWE-Bench comparison between Nemotron and Qwen3.5 uses different agent scaffolds (OpenHands vs. SWE-Agent), and those differences are not trivial, so the roughly 6-point gap between 60.47% and 66.40% should not be taken as a clean head-to-head. Broader independent evaluation on real production workloads is still sparse. Community reception on r/LocalLLaMA has been cautiously positive but not as enthusiastic as you might expect for a model with these specs, in part because the NVFP4 format requires NVIDIA Blackwell hardware to fully realize the inference speed benefits, which limits who can actually take advantage of the architecture’s most impressive numbers right now.

There are also broader questions about what NVIDIA is building toward here. The Nemotron Coalition announcement at GTC 2026 suggests this is not just a model release but a positioning play to make NVIDIA’s hardware stack the natural home for open-weight model development. Whether that succeeds depends on ecosystem adoption that is still early.

It is also worth watching what happens with Nemotron 3 Ultra (expected to follow Super), as well as how the reinforcement fine-tuning capability coming to Amazon Bedrock for Nemotron models develops over the next few months.

The Bottom Line

Nemotron 3 Super is a genuinely well-engineered model that solves a real set of problems in multi-agent AI development, particularly around throughput and long-context retention, and it does so with an architecture that is novel enough to be worth studying even if you are not going to deploy it today.

It is not the strongest open-weight model across every benchmark – Qwen3.5 leads on several dimensions, and DeepSeek models carry more raw general intelligence – but for teams building agentic pipelines on NVIDIA infrastructure who need a model they can self-host, fine-tune on proprietary data, and run at scale without per-token API costs, the combination of open weights, 1M-token context, and Blackwell-native efficiency makes it one of the more compelling options in its class.

As the AI infrastructure layer continues to mature around models like this, the gap between frontier proprietary APIs and what you can run on your own hardware is narrowing, and Nemotron 3 Super is a good example of that trend.