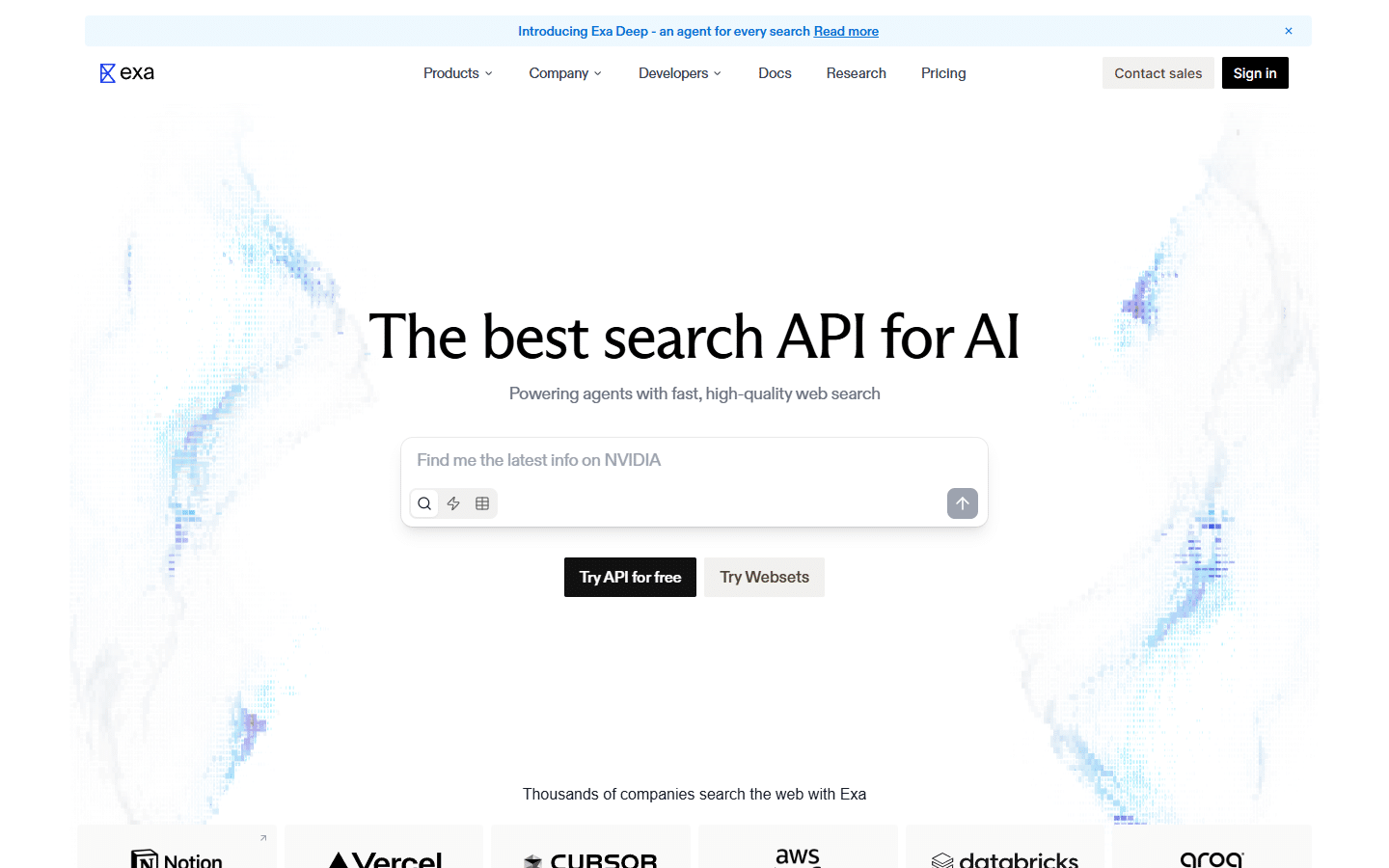

For a while now I had been hearing the name Exa AI pop up in developer circles and on the blogs I follow. It has not seemed like the kind of tool that gets a lot of mainstream attention, and most people who use the AI products it powers have no idea it exists (which is actually a sign of a really great product). That is the whole point. Exa is not trying to be the next Google, and it is not trying to be a consumer product you use every morning to check the news – it is the search infrastructure that sits underneath AI agents, RAG pipelines, and enterprise workflows, quietly doing the work that makes those systems useful in the first place. I love infrastructure plays – it is why I love ISO-compliant blockchains, but I digress.

If you have ever used an AI tool that could search the web and give you a grounded, accurate answer – rather than just hallucinating something plausible – there is a reasonable chance Exa was involved somewhere in that chain. That includes products built on platforms like StackAI, and it includes applications built with the Vercel AI SDK, which is why Guillermo Rauch, the CEO of Vercel, described Exa as “Perplexity-as-a-service – the infrastructure to ground your AI products on real world data.” That quote is worth sitting with for a second, because Rauch isn’t someone who throws endorsements around casually, and it captures something real about what Exa actually is.

Here is Why Exa AI Matters Even If You Are Not a Developer

The thing a lot of people miss when they hear “search API” is that they picture something technical and niche, relevant only if you write code for a living. But the reach of what Exa does extends much further than that. AI agents are increasingly doing things that affect everyone – writing reports, researching candidates, analyzing competitors, building lead lists, summarizing financial filings – and the quality of all that work depends almost entirely on the quality of the data those agents can retrieve.

Traditional search engines were built for humans, and that design choice shows in everything about them. They optimize for SEO signals, they return pages with ads and navigation clutter mixed in, they handle keyword queries well but fall apart when the question is nuanced or conceptual, and they were never designed to be called ten times per second by an AI agent trying to build a comprehensive research brief. Google is brilliant for humans typing something into a box and reading a list of links, but it is genuinely bad infrastructure for the way AI works now.

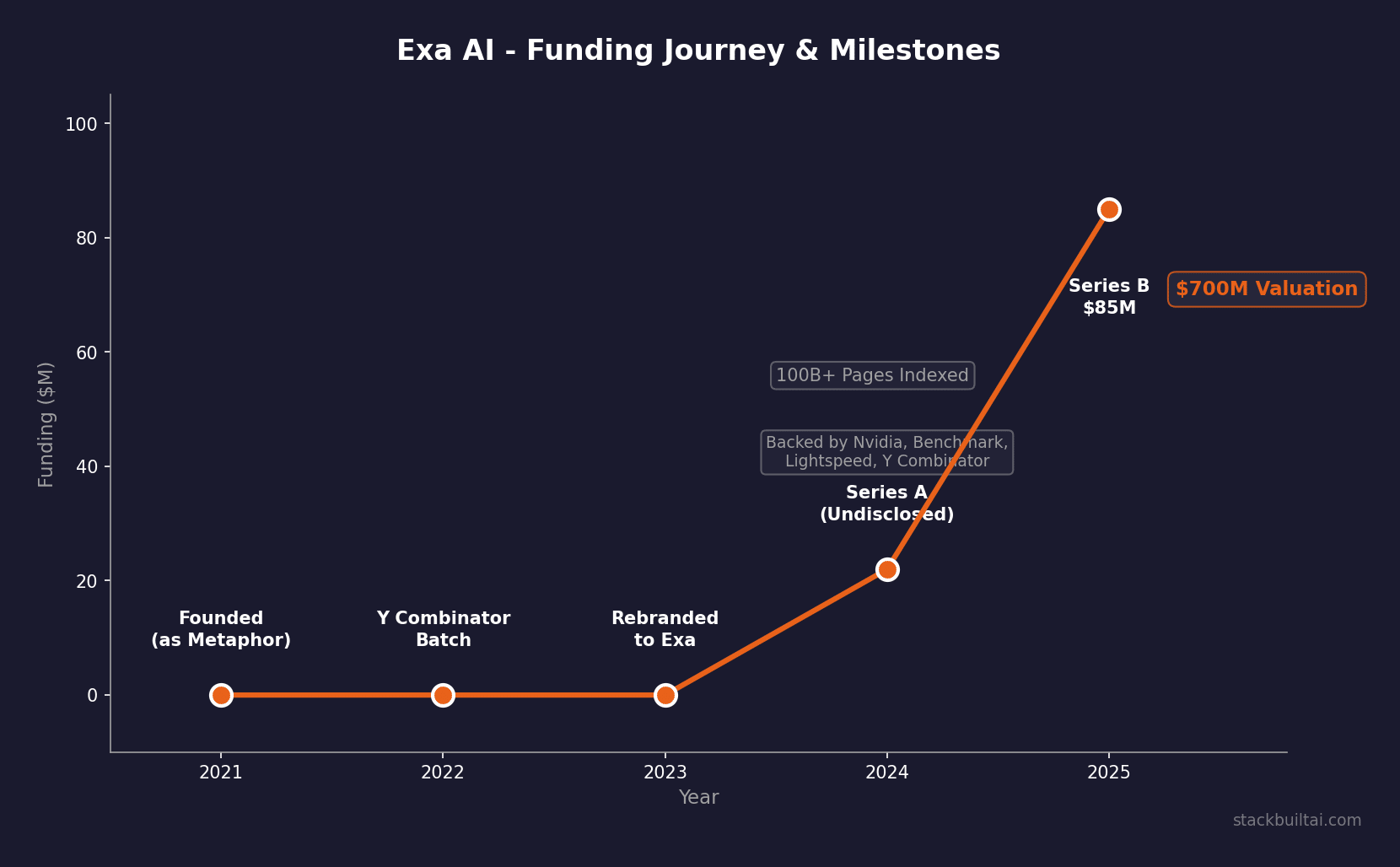

Exa exists specifically to fix that mismatch. Founded in 2021 and backed by over $100M from Benchmark, Lightspeed, Nvidia, and Y Combinator – including an $85M Series B in September 2025 that valued the company at $700M – Exa built its own search index from scratch, covering hundreds of billions of webpages, and trained neural models specifically to understand how humans connect ideas rather than just which words appear together on a page.

What Exa AI Actually Does Differently

The core technical difference comes down to how the index is built and queried, and it is genuinely not just marketing language – it is a real architectural distinction that produces meaningfully different results.

Traditional keyword search (the kind Google runs on at its core) works by building inverted indexes – essentially a giant map from words to the pages that contain them – and then ranking results based on things like how often the word appears, how authoritative the linking sites are, and a long list of other signals that have over time been heavily influenced by SEO manipulation. The result is a system that is very good at matching words but not at matching meaning.
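
To make the “map from words to pages” idea concrete, here is a minimal toy sketch of an inverted index with boolean AND lookup. The three documents are invented for illustration, and the sketch deliberately leaves out the ranking signals (term frequency, link authority, and so on) that a real engine layers on top; it only shows why pure word matching misses pages that mean the right thing but use different words.

```python
from collections import defaultdict

def build_inverted_index(docs):
    """Map each lowercase word to the set of document IDs that contain it."""
    index = defaultdict(set)
    for doc_id, text in docs.items():
        for word in text.lower().split():
            index[word].add(doc_id)
    return index

def keyword_search(index, query):
    """Return the sorted IDs of documents containing every query word (boolean AND)."""
    postings = [index.get(word, set()) for word in query.lower().split()]
    if not postings:
        return []
    return sorted(set.intersection(*postings))

# Invented example documents.
docs = {
    "a": "fintech startup raises series b in singapore",
    "b": "singapore payments company closes new funding round",
    "c": "series b recap for the local football league",
}
index = build_inverted_index(docs)
print(keyword_search(index, "series b"))           # ['a', 'c']
print(keyword_search(index, "singapore fintech"))  # ['a']
```

The football recap matches “series b” because the words line up, while the Singapore payments company is missed for “singapore fintech” because it never uses the word “fintech”.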

Exa’s approach is different. Instead of indexing keywords, Exa trains specialized transformer models to process each document into embeddings – high-dimensional vectors that capture semantic meaning. When an AI agent sends a query to Exa, the system matches the meaning of the query against the meaning of indexed documents, not just the words. So a query like “Series B fintech companies in Singapore with 50-200 employees” does not return pages that happen to contain those words in combination – it returns the actual companies that match the semantic description.
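
A rough sketch of what “matching meaning against meaning” looks like mechanically: documents and the query are vectors, and results are ranked by cosine similarity. The three-dimensional vectors below are hand-picked stand-ins (loosely “finance”, “Singapore”, “sports”), not output from Exa’s models; real embeddings come from a trained transformer, typically have hundreds or thousands of dimensions, and at web scale are served from an approximate nearest-neighbor index rather than the linear scan shown here.

```python
import math

def cosine(u, v):
    """Cosine similarity: dot product divided by the product of the vector norms."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)

def semantic_search(query_vec, doc_vecs, k=2):
    """Rank documents by cosine similarity to the query and return the top k IDs."""
    scored = sorted(doc_vecs.items(), key=lambda item: cosine(query_vec, item[1]), reverse=True)
    return [doc_id for doc_id, _ in scored[:k]]

# Hand-made toy "embeddings" (dimensions loosely: finance, Singapore, sports).
doc_vecs = {
    "a": [0.9, 0.8, 0.0],  # fintech startup raises series b in singapore
    "b": [0.8, 0.9, 0.1],  # singapore payments company closes funding round
    "c": [0.1, 0.0, 0.9],  # series b football recap
}
query_vec = [0.9, 0.9, 0.0]  # "Series B fintech companies in Singapore"
print(semantic_search(query_vec, doc_vecs))  # ['a', 'b']
```

Unlike the keyword lookup, the payments company now ranks near the top and the football recap drops to the bottom, because the vectors encode topic rather than shared words.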

That distinction matters enormously for AI agents, which tend to ask questions the way a researcher would rather than the way a search bar was designed to handle. The training objective for Exa’s neural ranking was link prediction – essentially teaching the model the patterns by which humans naturally connect ideas and references on the web – which gives it a sense of what a given page is actually about, not just which words it uses.

And crucially, Exa doesn’t wrap Google or Bing. It maintains its own search index covering hundreds of billions of webpages, powered by a GPU cluster they call the “Exacluster” – 144 H200 GPUs. That is what makes things like zero data retention (ZDR) possible for enterprise customers, because when you build your own infrastructure end-to-end, you actually control what gets stored. A search API that wraps Google can’t honestly offer that.

The Two Products

Exa’s product line is organized around two main products, which are quite different from each other even though they share the same underlying search infrastructure.

The Exa API

The API is aimed at developers and AI teams building agents, pipelines, and applications that need access to the web. There are four main endpoints. The basic Search endpoint returns URLs and, optionally, full page content; this is what most agent tool calls use, and it is built for the speed that agentic workflows need. The Contents endpoint retrieves full page content for pages you already have. There is also an Answer endpoint that returns a synthesized response to a direct question, similar to what you might get from Perplexity but designed to be called programmatically. Finally, the Research endpoint handles deeper multi-step queries that involve actual agentic behavior on Exa’s end, gathering and reasoning across multiple sources before returning results.

The API supports a really impressive set of filters – category filters that let you restrict results to companies, people, news, tweets, research papers, personal sites, or financial reports, along with includeText and excludeText parameters for content matching, ISO 8601 date ranges on both publish and crawl dates, domain allowlists and blocklists (up to 1,200 domains each, compared to Tavily’s cap of 300), and livecrawl control via maxAgeHours. It integrates natively with LangChain, LlamaIndex, CrewAI, the Vercel AI SDK, Google ADK, Composio, n8n, Zapier, and about 20 other frameworks.
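
To show how those filters combine in practice, here is a hedged sketch of a helper that assembles a search request body and enforces two of the constraints mentioned above (ISO 8601 dates and the 1,200-domain cap). The helper `build_search_body` is hypothetical, and the JSON field names (`numResults`, `category`, `includeDomains`, `excludeDomains`, `includeText`, `excludeText`, `startPublishedDate`, `endPublishedDate`) and category spellings are assumptions based on my understanding of Exa’s API; check them against Exa’s current API reference before use. Crawl-date and `maxAgeHours` options are left out rather than guessed at, and nothing is sent over the network.

```python
import json
from datetime import datetime

# Category names paraphrase the seven filters listed above; exact API spellings are assumed.
CATEGORIES = {"company", "people", "news", "tweet", "research paper", "personal site", "financial report"}
MAX_DOMAINS = 1200  # per-list cap cited above

def _check_iso8601(value):
    """Return value unchanged, or raise ValueError if it is not an ISO 8601 date/time."""
    datetime.fromisoformat(value.replace("Z", "+00:00"))
    return value

def build_search_body(query, *, category=None, include_domains=(), exclude_domains=(),
                      include_text=(), exclude_text=(), start_published=None,
                      end_published=None, num_results=10):
    """Assemble a JSON-ready search body (hypothetical helper; field names assumed)."""
    if category is not None and category not in CATEGORIES:
        raise ValueError(f"unknown category: {category}")
    for name, domains in (("include_domains", include_domains), ("exclude_domains", exclude_domains)):
        if len(domains) > MAX_DOMAINS:
            raise ValueError(f"{name} exceeds {MAX_DOMAINS} entries")
    body = {"query": query, "numResults": num_results}
    if category:
        body["category"] = category
    if include_domains:
        body["includeDomains"] = list(include_domains)
    if exclude_domains:
        body["excludeDomains"] = list(exclude_domains)
    if include_text:
        body["includeText"] = list(include_text)
    if exclude_text:
        body["excludeText"] = list(exclude_text)
    if start_published:
        body["startPublishedDate"] = _check_iso8601(start_published)
    if end_published:
        body["endPublishedDate"] = _check_iso8601(end_published)
    return body

body = build_search_body(
    "Series B fintech companies in Singapore",
    category="company",
    exclude_domains=["example.com"],
    start_published="2025-01-01",
)
print(json.dumps(body, indent=2))
```

Validating locally before the call is a design choice: a malformed date or an oversized blocklist fails fast in your own code instead of surfacing as an API error mid-agent-run.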

Websets

Websets is a different product aimed at a different buyer – closer to a business intelligence or sales intelligence tool than a developer API, though it has an API as well. The idea is that you describe what you want to find – “VP of Engineering at mid-market healthcare companies” or “bootstrapped B2B SaaS companies with 10-50 employees that recently hired a sales lead” – and Exa’s agentic system goes out, finds and validates every result that matches, and returns it as a structured, enrichable matrix, like a spreadsheet populated by AI. You can add columns for enrichment (LinkedIn profile, revenue estimates, recent news) and the system builds out each row using the same search infrastructure underneath.
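
Exa’s internals for Websets are not described here, so the following is a purely hypothetical toy model of the shape of the workflow: candidates are validated against every criterion, and survivors become rows in a matrix that grows one enrichment column at a time. The `Webset` class, the candidate records, and the `website` enrichment are all invented for illustration; in the real product the validation and enrichment steps are driven by Exa’s search infrastructure rather than local lambdas.

```python
from dataclasses import dataclass, field

@dataclass
class Webset:
    """Toy stand-in for a Webset: validated rows that gain enrichment columns."""
    criteria: list  # predicates every row must satisfy
    rows: list = field(default_factory=list)

    def add_candidates(self, candidates):
        """Keep only candidates that pass every criterion (the validation step)."""
        for row in candidates:
            if all(check(row) for check in self.criteria):
                self.rows.append(dict(row))

    def enrich(self, column, fn):
        """Add a column by computing fn(row) for every row (the enrichment step)."""
        for row in self.rows:
            row[column] = fn(row)

# Invented candidates for "bootstrapped B2B SaaS companies with 10-50 employees".
candidates = [
    {"name": "Acme", "model": "B2B SaaS", "employees": 30, "funding": "bootstrapped"},
    {"name": "Beta", "model": "B2B SaaS", "employees": 120, "funding": "bootstrapped"},
    {"name": "Gamma", "model": "B2C app", "employees": 25, "funding": "seed"},
]
ws = Webset(criteria=[
    lambda r: r["model"] == "B2B SaaS",
    lambda r: 10 <= r["employees"] <= 50,
    lambda r: r["funding"] == "bootstrapped",
])
ws.add_candidates(candidates)
ws.enrich("website", lambda r: f"https://{r['name'].lower()}.example")  # placeholder enrichment
print(ws.rows)
```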

It is not fast – Exa’s own explainer suggests you might want to go get a cup of coffee while it runs – but the comprehensiveness is the point. This is what the company calls a “scaling law for search”: the more compute you apply to a query, the more complete and verified the results become. That makes Websets well suited for use cases where you would rather wait fifteen minutes and get a near-perfect list than get a fast but patchy one.

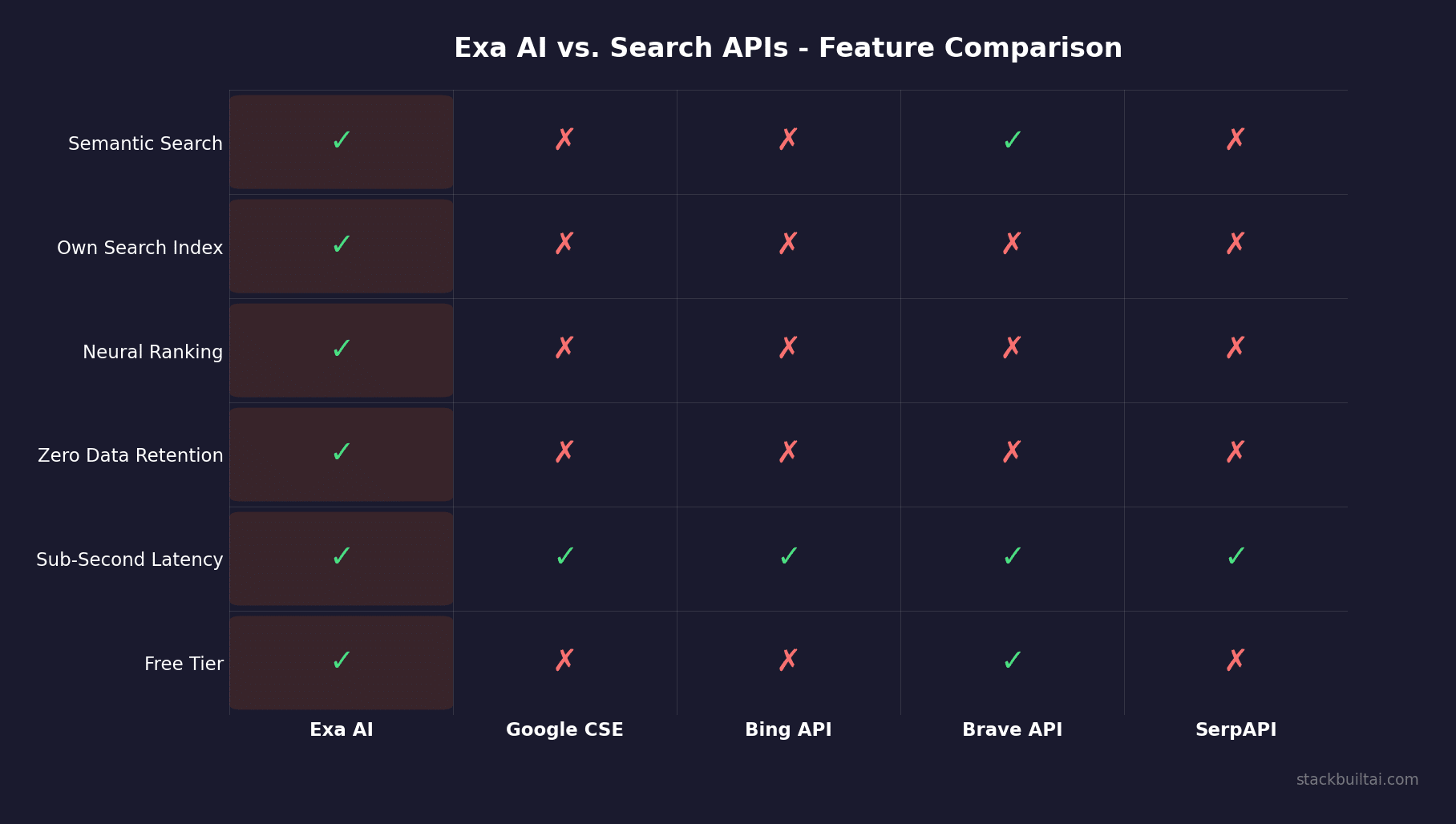

Here is What Makes Exa Better Than the Alternatives

The most commonly cited alternative in the developer community is Tavily, which was acquired by Nebius in February 2026 and is a solid, well-regarded search API with a simpler setup. The comparison is worth actually digging into rather than just taking Exa’s marketing at face value, and I will of course note that the benchmark data I am referencing comes from a Fortune 100 enterprise evaluation that Exa cites heavily on their comparison page, so there is at least some self-selection in what gets highlighted.

That said, the numbers are not trivial differences. On the WebWalker benchmark – a complex multi-hop web retrieval task evaluated independently – Exa scored 81% accuracy versus Tavily’s 71%. On the multilingual MKQA benchmark, Exa hit 70% versus Tavily’s 63%. The latency gap is substantial too: p95 response time at 1.4-1.7 seconds for Exa versus 3.8-4.5 seconds for Tavily on the same queries, with Exa’s Instant mode coming in under 200 milliseconds (Tavily’s ultra-fast mode claims 90ms but reportedly measures 210ms on typical queries and 420ms on longer ones).
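
Since the comparison leans on p95 latency, it helps to see what that number measures. This sketch uses the nearest-rank definition (one of several percentile conventions; monitoring tools often interpolate instead), and the latency samples are invented rather than taken from any benchmark. The point it shows: p95 ignores the single slowest call in twenty, but jumps as soon as more than 5% of calls are slow.

```python
def p95(latencies_ms):
    """Nearest-rank 95th percentile: smallest sample with at least 95% of samples at or below it."""
    if not latencies_ms:
        raise ValueError("need at least one sample")
    ordered = sorted(latencies_ms)
    rank = (95 * len(ordered) + 99) // 100  # ceil(0.95 * n) in integer math, 1-based
    return ordered[rank - 1]

# Invented samples: 20 calls with one slow outlier vs. two slow outliers.
one_slow = [150] * 10 + [300] * 9 + [4000]
two_slow = [150] * 10 + [300] * 8 + [3000, 4000]
print(p95(one_slow))  # 300
print(p95(two_slow))  # 3000
```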

The structural differences go beyond speed and accuracy. Exa has its own search index; Tavily doesn’t, and that matters for customization, ZDR, and result quality on queries that fall outside mainstream web content. Exa returns up to 100 results per query versus Tavily’s cap of 20, has seven content category filters versus Tavily’s three (general, news, finance), and includes specialized people search and company search indexes that Tavily simply does not have. Exa also offers neural/semantic search, while Tavily relies on keyword ranking. There are of course cases where Tavily’s simpler, lower-overhead setup is the right call – if you want fast defaults and minimal configuration, it is genuinely easier to drop in – but for complex or production-scale AI applications, the trade-offs increasingly favor Exa.

Who Is Using Exa AI Right Now

The customer base is largely enterprise and developer-focused, which is consistent with where Exa has positioned itself. Cursor – the AI code editor that has become arguably the most talked-about developer tool of the past two years – uses Exa for search. Lovable, which has had extraordinary growth as a UI builder, runs on it. StackAI, which builds enterprise AI agent platforms for consulting firms and private equity shops, integrated Exa’s Search, Answer, and Websets APIs into its platform in under two weeks and now exposes them as a first-class node in its visual agent builder. OpenRouter, which aggregates over 400 large language models and routes requests between them, uses Exa as its search infrastructure.

What is interesting about the enterprise use cases is how specific they get. Top private equity and consulting firms are running multi-step research agents on Exa to do the kind of background research that would have taken a junior analyst a full day – financial data, company comparisons, market sizing, recent news. Recruiting teams are using Websets to find candidates matching very specific skill and experience combinations across Exa’s 1 billion+ indexed LinkedIn profiles with 50 million+ updates per week. Sales teams (including, apparently, Exa’s own team, which runs its outbound entirely on Websets) are using it for lead enrichment and account research.

The company went from about 7 employees to roughly 25-28 within the span of a year or so, and they report that approximately 95% of their growth has been inbound, which is either a testament to product-market fit or to the fact that developers who find a search API that works well tend to stick with it. I don’t know if they have been focused on outbound channels at all, but given that they are apparently running their own SDR process on their own product, it seems like a deliberate choice either way.

Pricing Breakdown

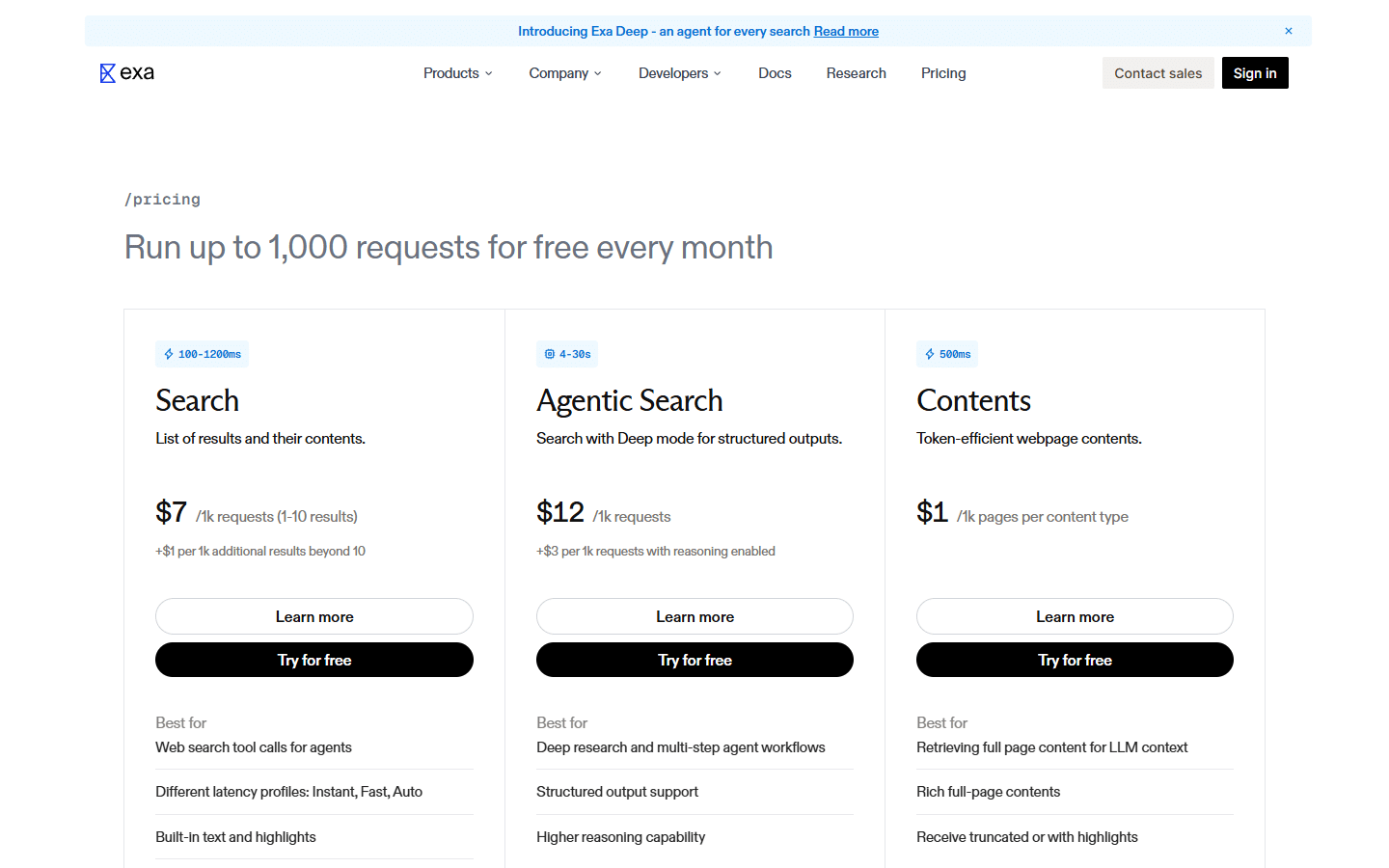

The pricing structure is usage-based, which is the right model for an API that serves such a wide range of query volumes, and it is actually more accessible than you might expect for smaller teams.

The free tier gives you 1,000 requests per month across all products, which is enough to build and test most integrations without spending anything. There is also a startup and education grant program that provides $1,000 in free credits for teams that are building or launching.

For paid usage, the main Search endpoint runs at $7 per 1,000 requests, with the base price including 10 results per query, plus $1 per 1,000 additional results beyond that. Summaries add another $1 per 1,000. Agentic Search (the deeper, multi-step variant) runs $12 per 1,000 requests, with reasoning adding $3 per 1,000. Contents (full page retrieval) is $1 per 1,000 pages per content type. The Answer endpoint is $5 per 1,000 answers. Research pricing breaks down into agent search operations at $5 per 1,000, page reads at $5 per 1,000 ($10 per 1,000 for the pro variant; a page equals 1,000 tokens of content), and reasoning tokens at $5 per million.

For context: if you are running an AI agent that makes 5,000 search calls per month – which is actually quite a lot for most small or mid-size applications – you are looking at roughly $35 in search costs. That is a number that fits comfortably inside almost any AI product’s unit economics. The pricing only starts to feel significant at high enterprise scale, which is exactly when you would be negotiating custom rates anyway.
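
The arithmetic generalizes into a small back-of-envelope estimator using the per-1,000 rates quoted above. It is a sketch, not a billing tool: the function `monthly_cost` is hypothetical, it ignores the free tier and any enterprise discounts, and it assumes “additional results” means each result beyond the included 10, charged at $1 per 1,000 such results.

```python
# Rates in USD per 1,000 units, as quoted in the pricing paragraph above.
SEARCH_PER_1K = 7.0          # includes 10 results per request
EXTRA_RESULTS_PER_1K = 1.0   # each result beyond the included 10 (interpretation assumed)
SUMMARY_PER_1K = 1.0
CONTENTS_PER_1K = 1.0        # per page, per content type
ANSWER_PER_1K = 5.0

def monthly_cost(searches, results_per_search=10, summaries=0, content_pages=0, answers=0):
    """Estimate a monthly bill in USD (ignores the free tier and enterprise discounts)."""
    extra_results = searches * max(0, results_per_search - 10)
    total = (searches * SEARCH_PER_1K
             + extra_results * EXTRA_RESULTS_PER_1K
             + summaries * SUMMARY_PER_1K
             + content_pages * CONTENTS_PER_1K
             + answers * ANSWER_PER_1K) / 1000
    return round(total, 2)

print(monthly_cost(5_000))  # 35.0, matching the example above
print(monthly_cost(5_000, results_per_search=25, content_pages=5_000))  # 115.0
```

The second call shows where costs actually move: asking for 25 results instead of 10 adds more than the base search price, so trimming result counts is usually the first lever to pull.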

Enterprise plans include custom pricing, volume discounts, up to 1,000 results per search, custom rate limits, tailored moderation, SLAs, one-on-one onboarding, and zero data retention as a standard offering rather than an add-on.

Here is Where Exa Fits in the AI Stack

The framing that makes the most sense to me is to think of Exa as infrastructure rather than as a product, and I think that matters more than people realize when thinking about where AI is headed. Consumer AI products – the Perplexities and the ChatGPTs – get all the attention and the valuation headlines, but the infrastructure layer underneath them is what determines whether those products can actually deliver on their promises over time.

Exa sits at the grounding layer of the AI stack, which is the part responsible for connecting LLMs to the real world as it currently exists. LLMs are trained on data with a cutoff date, they hallucinate with varying frequency depending on the task, and they have no inherent ability to access information that was published last week. Search grounding – giving an agent access to a live, accurate, semantically-aware view of the web – is how you address all three of those problems at once. And the quality of that fix depends almost entirely on the quality of the search infrastructure underneath it.
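
Mechanically, grounding usually means pasting retrieved results into the model’s prompt and asking it to answer only from them, with citations. Here is a minimal sketch of that prompt-building step. The result dicts (`title`, `url`, `text`) are a hypothetical shape standing in for whatever your search call returns, the sample data is invented, and the LLM call itself is omitted.

```python
def build_grounded_prompt(question, results, max_chars=500):
    """Format search results as numbered sources and ask the model to cite them as [n]."""
    lines = ["Answer the question using only the sources below. Cite sources as [n].", ""]
    for i, r in enumerate(results, start=1):
        snippet = r["text"][:max_chars].strip()  # truncate long pages to control prompt size
        lines.append(f"[{i}] {r['title']} ({r['url']})")
        lines.append(snippet)
        lines.append("")
    lines.append(f"Question: {question}")
    return "\n".join(lines)

# Invented example results.
results = [
    {"title": "Example page", "url": "https://example.com/a", "text": "  Some retrieved text.  "},
]
print(build_grounded_prompt("What does the page say?", results))
```

Numbering the sources gives the model a cheap way to cite, and gives you a way to check each claim against the page it came from.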

What makes this interesting from a strategic perspective is that the infrastructure layer tends to be incredibly sticky once it is embedded into production systems. A company that integrates Exa into its AI agent architecture is not likely to rip that out and replace it with something else unless there is a compelling reason, because the integration cost is real and the search infrastructure is one of the parts that just tends to work quietly in the background. Exa seems to understand this – the zero data retention, the SOC 2 compliance, the enterprise SLAs – all of that is oriented toward making Exa the kind of vendor a compliance-sensitive enterprise can commit to long-term.

The company’s goal, stated pretty plainly on their about page, is “perfect search” – highly controllable, unbiased, and comprehensive enough that AI systems can use it to organize and access essentially all of the world’s information. That is a long-horizon ambition, not a next-quarter roadmap item, and it positions Exa as a foundational infrastructure bet rather than a feature.

A Measured Take on Where This Is All Going

Exa is not a perfect product – yet. The Websets tool is powerful but slow by design, and not every use case can accommodate that trade-off. The pricing, while reasonable for most teams, adds up quickly at enterprise search volumes, which is why the custom tier exists. And the competitive landscape for AI search APIs isn’t static – Tavily got acquired and is presumably getting resourced, Perplexity has its own API, and newer entrants like Parallel are showing strong results on some benchmarks where Exa does not necessarily lead.

That said, what Exa has built – a purpose-built search engine with its own index, neural semantic ranking, and a developer ecosystem that spans framework integrations like LangChain and the Vercel AI SDK and customers like Cursor and OpenRouter – is difficult to replicate quickly. A well-funded competitor could always build something comparable, but the time it takes to train models specifically for link prediction across hundreds of billions of documents, and to build the infrastructure to serve them at sub-200ms latency, is measured in years, not months.

If you are building AI agents or AI-powered products and you are currently using a search API as a tool call within those agents, Exa is worth a serious evaluation, because the accuracy and latency improvements are real and the free tier makes the initial test cost nothing. If you are in sales, recruiting, or market research and you have been curious about what AI-powered prospecting lists actually look like in practice, Websets is one of the more interesting things to come out of the AI tool explosion of the past two years – slower than you might want, but more thorough than most alternatives.

And if you are just someone trying to understand why some AI tools seem to actually know things while others make things up, a good part of the answer is the search infrastructure underneath them, and Exa is one of the companies doing the most to make that infrastructure worth having.

Keep Reading

If you found this useful, I’d recommend checking out “What Is a RAG Pipeline and Why Does It Matter for AI Quality?” and “The Best AI Agent Builders in 2026 – Compared.” We’re covering more tools like this regularly at StackBuilt and I’m trying to keep the focus on things that actually matter for people who are building real businesses – not just whatever is trending on Product Hunt that week.